Report to Congressional Requesters

United States Government Accountability Office

For more information, contact: James R. McTigue at McTigueJ@gao.gov.

What GAO Found

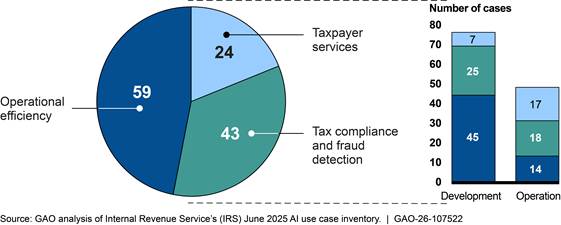

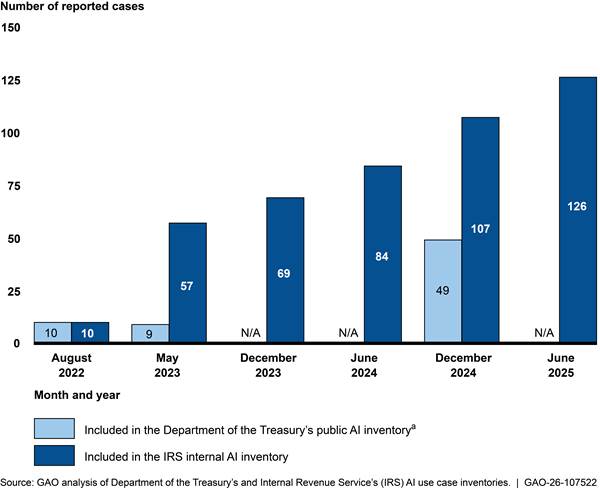

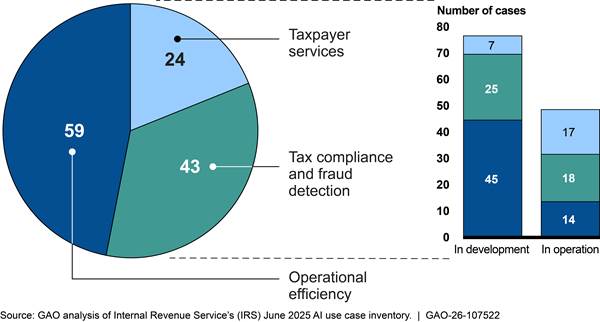

IRS had 126 active artificial intelligence (AI) use cases—applications of AI for a particular business need—in its inventory as of June 2025. These 126 use cases included 65 that were either too sensitive for public reporting or were research and development efforts exempt from public reporting. Although IRS has been using AI for several years, its inventory has grown rapidly since reporting 10 use cases in August 2022. IRS categorized most use cases in the June 2025 inventory as either improving (1) operational efficiency or (2) tax compliance and fraud detection. IRS listed 61 percent (77 of 126) of use cases as in development in June 2025 (see figure).

Major staffing reductions at IRS in 2025 could greatly affect its ability to use AI. For example, officials in the Research, Applied Analytics and Statistics group said they lost 63 employees who had been working full- or part-time on AI. Other IRS units also reported reductions in staff that support AI efforts, in addition to organizational and contractual changes. Still, IRS officials stated that the agency plans to use more AI in the future. However, IRS officials said they had not identified skills needed to support AI or developed a plan to address the skills gaps. The recent staff reductions, the intent to pursue additional AI initiatives, and the absence of a plan to address AI skills gaps increase the risk that IRS AI efforts will not succeed.

In addition, IRS’s inventory did not always include quality information. For example, GAO determined that over 25 percent of use cases did not include information on how the use case was to benefit the agency. GAO also identified use case inventory omissions. For example, GAO identified several AI-enabled tools IRS officials said were contracted to help build criminal cases. These tools were not included in the inventory. Improved IRS processes and internal communications can address these shortcomings.

IRS’s AI governance process had several entities with oversight of individual AI use cases. However, none were responsible for managing AI investments across the agency. Further, IRS does not have a process to ensure its AI investments are contributing to agency-wide goals. Given the risks facing IRS, a more strategic approach is warranted that enables IRS to identify high-value AI initiatives that contribute to agency-wide goals.

Why GAO Did This Study

IRS has used AI for many years. It has numerous AI initiatives under development and in operation, including in areas such as taxpayer service and audit selection. However, future IRS funding, strategy, and staffing levels are uncertain. This dynamic environment highlights the importance of understanding how AI can deliver results for IRS.

GAO was asked to review IRS’s use of AI. This report assesses (1) how IRS uses AI and how resource changes at IRS could affect AI efforts; (2) the quality of information in IRS’s AI inventory; and (3) how IRS strategically manages its AI investments.

GAO reviewed IRS’s internal and public AI inventories, and relevant Department of the Treasury and IRS documents. GAO compared information in and processes for managing IRS’s AI inventory to IRS policy and guidance, law, government-wide guidance, and leading practices. In addition, GAO compared IRS’s efforts to manage its AI investments against federal guidance and leading practices. GAO also interviewed Treasury and IRS officials.

What GAO Recommends

GAO is making eight recommendations to IRS, including to (1) identify skills gaps and develop an AI workforce plan; (2) implement a comprehensive quality assurance process for AI inventory entries; (3) clarify internal communications to ensure all AI use cases are included in the inventory; and (4) require reporting on use case alignment to strategic goals.

IRS agreed with all eight of GAO’s recommendations and described steps it plans to take, or has started taking, in response to each recommendation. IRS also provided technical comments, which we incorporated as appropriate.

| Abbreviations | |
|---|---|
| CIO | Chief Information Officer |
| COTS | commercial-off-the-shelf |
| CTO | Chief Technology Officer |
| EO | executive order |
| FAR | Federal Acquisition Regulation |
| FedRAMP | Federal Risk and Authorization Management Program |
| GSA | General Services Administration |
| HCO | Human Capital Office |
| IRA | Inflation Reduction Act |
| IRS | Internal Revenue Service |
| OMB | Office of Management and Budget |
| Procurement | Office of the Chief Procurement Officer |
| RAAS | Research, Applied Analytics and Statistics |

This is a work of the U.S. government and is not subject to copyright protection in the United States. The published product may be reproduced and distributed in its entirety without further permission from GAO. However, because this work may contain copyrighted images or other material, permission from the copyright holder may be necessary if you wish to reproduce this material separately.

March 24, 2026

The Honorable Terri Sewell

Ranking Member

Subcommittee on Oversight

Committee on Ways and Means

House of Representatives

The Honorable Linda T. Sanchez

House of Representatives

The use of AI has led to significant advancements, such as the automated detection of potential fraud, and holds promise for increasing the effectiveness and efficiency of government operations. At the same time, AI poses unique challenges for oversight because it is an emerging technology and its inputs and operations are not always transparent. While the Internal Revenue Service (IRS) has been using AI for many years, its use of AI has recently increased for activities such as answering taxpayer questions and helping select organizations to audit. We previously reported on several IRS applications of AI and made recommendations to improve their design, documentation, and effectiveness.[1]

Increased use of AI has been a goal at IRS for the past few years, although there have been fluctuations in IRS’s resources and priorities. Specifically, in August 2022, the Inflation Reduction Act (IRA) provided IRS approximately $79.4 billion in multiyear funding through fiscal year 2031.[2] In April 2023, IRS published a strategic plan to guide its investments under the IRA.[3] This plan and IRS’s 2024 update to the strategic plan cited AI technologies as key components of IRS’s plans to modernize operations to become a “digital-first agency” and narrow the tax gap.[4]

Subsequent laws have rescinded or prevented IRS from spending about two-thirds of the amount provided to it in the IRA, reducing the amount IRS can spend to approximately $25.9 billion.[5] In addition, the President’s budget proposal for fiscal year 2026 recommended a further rescission of $16.5 billion of IRA funds as well as an approximately 20 percent decrease in annual appropriations from fiscal year 2025.[6] Nonetheless, officials from the Department of the Treasury and IRS continue to cite AI technologies as tools that can help increase efficiency and better serve taxpayers. Uncertainty around future IRS funding highlights the importance of understanding how the agency is using AI to achieve its goals.
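The funding figures in this paragraph can be cross-checked with simple arithmetic. The following is a minimal illustrative sketch, not part of GAO's methodology. The variable names are hypothetical, the dollar amounts are the rounded figures stated above, and the final calculation assumes the proposed rescission would apply in full to the amount IRS can currently spend.

```python
# Rounded figures from the text, in billions of dollars.
ira_provided = 79.4         # multiyear IRA funding provided in August 2022
ira_available = 25.9        # amount IRS can spend after subsequent laws
proposed_rescission = 16.5  # further rescission proposed for fiscal year 2026

# Amount and share of IRA funding rescinded or otherwise made unavailable.
reduced = ira_provided - ira_available
reduced_share = reduced / ira_provided

# Assumption: the proposed rescission is enacted in full against the
# currently available amount. The text does not state the resulting balance.
remaining_if_enacted = ira_available - proposed_rescission

print(f"Reduced so far: ${reduced:.1f} billion ({reduced_share:.0%} of the original)")
print(f"Remaining if the proposal were enacted: ${remaining_if_enacted:.1f} billion")
```

The computed share is roughly 67 percent, which is consistent with the "about two-thirds" characterization in the text.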

You asked us to review IRS’s use of AI. This report assesses (1) how IRS uses AI and how resource changes at IRS could affect AI efforts, (2) the quality of information in IRS’s AI inventory, and (3) how IRS strategically manages its existing and planned use cases to identify potential cost savings and achieve agencywide goals.

To address our first objective, we analyzed IRS’s AI inventories from 2022 through 2025 to describe what AI IRS uses and how its inventory has changed over time.[7] We used IRS’s June 2025 internal AI inventory to describe summary statistics and other information, such as dates when use cases were initiated, the number of use cases in development and in operation, and examples of specific use cases. We also interviewed IRS AI governance officials about how the inventories were created and how they changed over time. We interviewed Treasury’s Chief Information Officer and IRS officials in several business units about topics such as the effects of changes to agency resources, priorities, or plans related to the use of AI, and workforce planning. We also reviewed supporting documentation. We compared information to IRS policy on workforce planning, key principles for workforce planning, and relevant aspects of Office of Management and Budget (OMB) guidance.[8]

To address our second objective, we reviewed the quality of IRS’s June 2025 AI inventory by assessing elements of its accuracy and completeness.[9] We reviewed IRS’s policies and processes for developing an accurate and complete AI inventory.[10] We also reviewed relevant federal law, executive orders (EO), and government-wide guidance on creating and maintaining AI inventories.[11] We conducted data reliability testing on IRS’s AI inventories, including for completion of required fields and consistency between dates. We also looked for possible missing entries from the AI inventory by reviewing documentation and interviewing officials responsible for the potentially missing use cases. We compared quality issues we identified to federal law and guidance, an EO, IRS policy and guidance, standards for internal control, and leading practices for AI accountability.[12]

To address our third objective, we reviewed IRS’s June 2025 inventory to identify examples of potential overlap or duplication in use cases. We interviewed officials responsible for or associated with those use cases to gain further insight. We also reviewed documentation about IRS strategic goals, objectives, and priorities. We assessed IRS AI policy and guidance to determine the extent to which they require collecting information related to AI strategic goals and outcomes. We compared IRS’s efforts for managing AI to OMB guidance and relevant aspects of leading practices for collaboration identified in our prior work.[13]

See appendix I for more information on our objectives, scope, and methodology.

We conducted this performance audit from April 2024 to March 2026 in accordance with generally accepted government auditing standards. Those standards require that we plan and perform the audit to obtain sufficient, appropriate evidence to provide a reasonable basis for our findings and conclusions based on our audit objectives. We believe that the evidence obtained provides a reasonable basis for our findings and conclusions based on our audit objectives.

Background

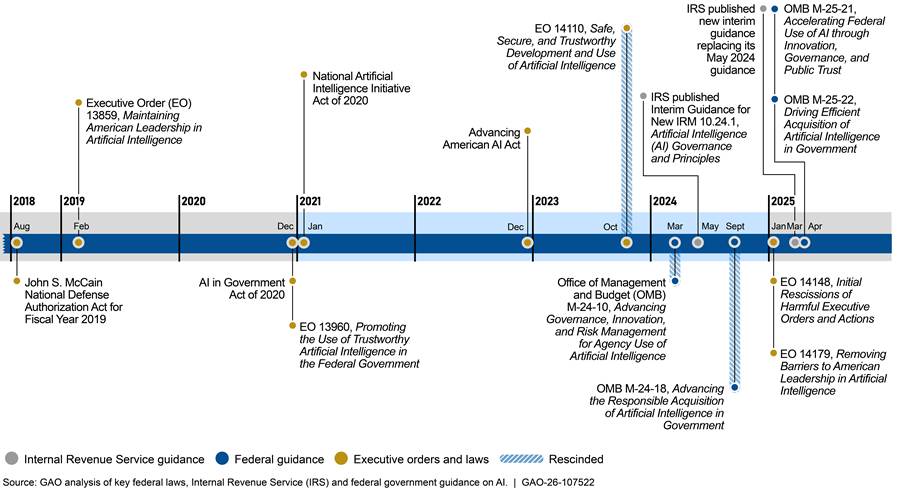

Evolution of Federal and IRS Requirements and Guidance on AI

Several administrations have issued EOs and Congress has passed legislation to guide and govern AI in the federal government. In response, IRS has developed guidance around its own use of AI. Figure 1 provides a timeline of key federal laws, EOs, and federal and IRS guidance from recent years.[14]

Figure 1: Timeline of Key Federal and IRS Requirements and Guidance on Government Use of AI as of June 2025

These laws, EOs, and guidance provide key information and include various requirements for federal agencies on government use of AI to ensure its use is trustworthy and transparent, among other goals.

· The John S. McCain National Defense Authorization Act for Fiscal Year 2019 established a definition of AI that OMB and IRS use in guidance.[15] According to IRS officials, the definition of “AI” is a moving target that the agency is closely tracking, because different government-wide guidance has used different definitions. However, the latest government-wide guidance on agency use of AI also used this 2019 definition.[16]

· EO 13960, Promoting the Use of Trustworthy Artificial Intelligence in the Federal Government, established principles for agency use of AI and introduced the first requirement for agencies to publicly report inventories.[17] This EO was signed in December 2020. Two years later, the Advancing American AI Act codified the requirement for agencies to develop AI inventories.[18] As a component of Treasury, IRS’s AI inventory is published as part of Treasury’s AI inventory.

· EO 14110, Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence, was issued in October 2023 and rescinded in January 2025.[19] The EO and related OMB memorandums had directed agencies to establish AI policies.[20] In response, IRS published interim policies for the agency’s implementation, use, and governance of AI in May 2024.

· EO 14179, Removing Barriers to American Leadership in Artificial Intelligence, was issued in January 2025. It directed OMB to revise its government-wide AI guidance established under EO 14110 as necessary to make it consistent with EO 14179.[21] As a result, in March 2025 IRS issued a new interim AI policy while awaiting updated guidance from OMB.[22]

· In April 2025, OMB published memorandums M-25-21 and M-25-22 to guide agencies’ use of AI in accordance with EO 14179.[23] These memorandums rescinded and replaced the previous versions related to EO 14110 and required agencies to update policies related to AI governance and acquisitions by December 2025. Agencies have until April 2026 to implement certain governance policies. In February 2026, IRS updated its AI governance policy, which integrates OMB and Treasury guidance.[24]

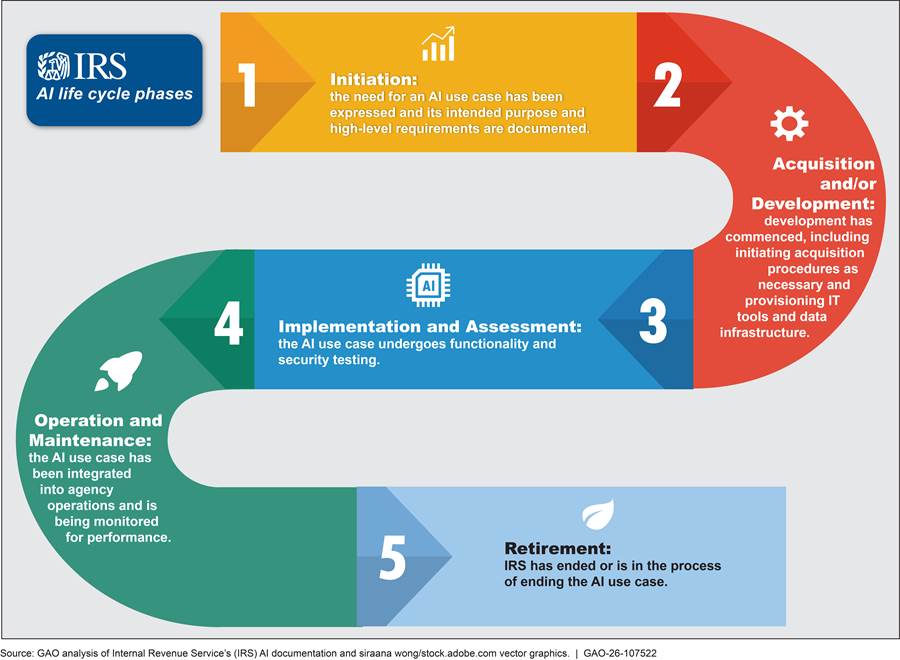

IRS Definition of a Use Case and the AI Life Cycle

IRS defines an AI use case as the application of an AI technique to meet a particular business need, such as to solve a problem or increase operational efficiency. A single use case may have multiple AI models and use multiple datasets to achieve its objectives. Use case owners are leaders in business units across IRS who are responsible for AI use cases’ operations and mission outcomes. AI project teams are staff in the business unit or units responsible for developing the use case, including program staff and technical team members. Throughout this report, we use the phrase use case owners to refer to both those responsible for a use case and the teams working on it.

As of June 2025, IRS guidance broke down AI development and use into five phases, in alignment with OMB guidance.[25] This is referred to as the AI life cycle. Figure 2 defines each of these phases. This report focuses on use cases listed as “active,” which includes AI in any stage except retirement.

Types of Costs Related to AI

According to IRS officials, AI expenditures can be difficult to track because they include direct costs that may be traceable to one or more individual use cases and indirect costs that are not captured at the use case level. Direct costs, such as salaries for AI experts, data analysts, and contractors to build an AI model, could be attributed to a specific use case. Contracts may also be used to purchase commercial tools or custom solutions.

Indirect costs, such as IT platforms that have the capacity to process large volumes of data or improve AI performance and reliability, may not be linked to one specific use case but are necessary for IRS to operate AI tools. In addition, IRS funds administrative activities related to its AI efforts, such as governance and procurement oversight to ensure compliance with applicable requirements. See appendix II for more information on types of costs associated with IRS’s use of AI.

IRS has previously estimated that it spends tens of millions annually on AI. For example, in September 2024, IRS estimated that the two business units most heavily involved with AI—IT and the Research, Applied Analytics and Statistics unit (RAAS)—spent over $58 million on direct and indirect AI costs in fiscal year 2024. In September 2025, RAAS estimated it spent over $28 million on AI in fiscal year 2025 and would spend an additional $32 million in fiscal year 2026.[26] IRS reported that these estimates included existing contractor, software, or hardware investments. However, they did not include costs such as ongoing maintenance for use cases or certain staff costs. According to IRS, those staff costs could add over 30 percent to the stated estimates.

Skills Gaps Could Substantially Affect IRS’s Ability to Continue Expanding Its Wide-Ranging AI Use

IRS Recently Began Expanding Its Use of AI

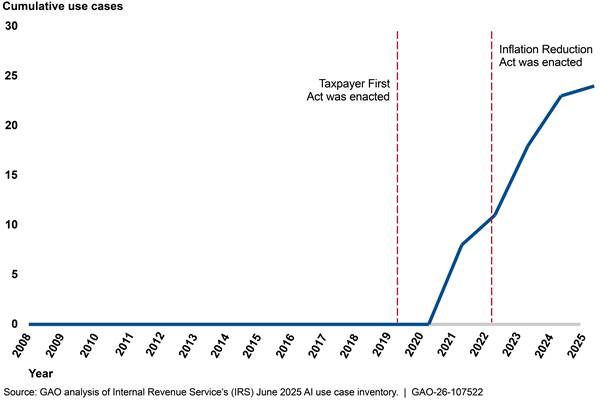

IRS’s June 2025 AI use case inventory had 126 use cases, a substantial increase from the 10 reported in 2022 (see fig. 3).[27] After first establishing a list of use cases to comply with government-wide public reporting requirements in August 2022, IRS created an internal inventory to track AI use across the agency.[28] IRS’s public inventory has been published once per calendar year since 2022 as a part of Treasury’s AI inventory reporting.[29]

Note: The number of public and internal use cases for a given year reflects the number of use cases listed in those inventories at each point in time. In some cases, IRS later determined that a “use case” did not involve AI technologies or comprised several individual use cases and therefore removed that entry from the inventory or created separate entries, respectively. When an inventory contained active, closed, and dormant use cases, we reported the total number of active use cases.

[a] Public reporting has only been required once per calendar year since 2022, in varying months responsive to government-wide guidance. IRS reports its public AI inventories through Treasury. For inventories where public reporting is marked N/A, Treasury was not required to publish inventories at that time. Government-wide inventory requirements permitted some AI to be withheld from public reporting, such as AI the agency deemed sensitive or certain AI being used for research and development.

IRS’s internal inventory includes more use cases than those published as a part of Treasury’s public inventory because agencies are permitted to withhold some use cases from public reporting. For example, agencies can exclude some common commercial products, use cases the agency deems sensitive, or use in certain research and development efforts.[30]

As a result, IRS publicly reported 49 use cases in Treasury’s December 2024 inventory, while its internal inventory included 107 use cases.[31] As of June 2025, IRS’s internal inventory included 65 use cases that were marked sensitive or non-reportable research projects and 61 that could be made available in subsequent public inventory reporting.[32] Numbers in this report reflect the number of AI use cases in IRS’s internal inventory, regardless of whether they are marked as sensitive or research projects.
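The inventory counts in this paragraph can be reconciled with a short calculation. The sketch below is illustrative only. The `share` helper and all variable names are hypothetical, and the percentages are derived here from the stated counts rather than reported by IRS.

```python
def share(part: int, whole: int) -> float:
    """Return part / whole as a fraction, rejecting an empty inventory."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return part / whole


# Counts stated in the text.
public_dec_2024 = 49      # use cases IRS publicly reported, December 2024
internal_dec_2024 = 107   # use cases in the internal inventory at that time
withheld_jun_2025 = 65    # marked sensitive or non-reportable research
reportable_jun_2025 = 61  # could appear in subsequent public reporting

# The two June 2025 groups should add up to the internal inventory total.
internal_jun_2025 = withheld_jun_2025 + reportable_jun_2025

print(f"June 2025 internal total: {internal_jun_2025}")
print(f"Publicly reported share, December 2024: {share(public_dec_2024, internal_dec_2024):.1%}")
print(f"Withheld share, June 2025: {share(withheld_jun_2025, internal_jun_2025):.1%}")
```

The June 2025 groups sum to the 126 use cases cited throughout this report.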

Changes in IRS’s inventory over time can generally be attributed to four factors:

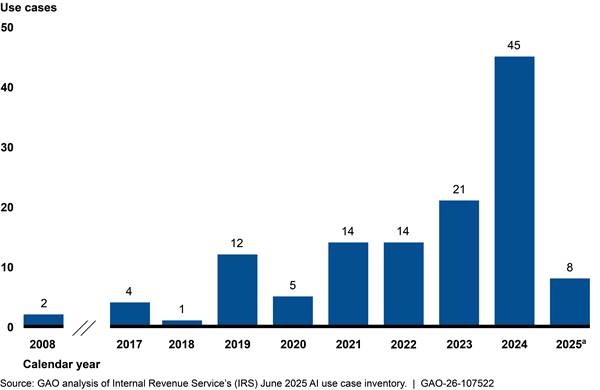

· Increasing use of AI. Two of IRS’s AI use cases date back to 2008, but most were initiated within the past 3 years (see fig. 4). Specifically, IRS initiated 84 of 126 use cases between June 2022 and June 2025, meaning IRS began to allocate substantially more resources toward developing AI in recent years. According to IRS officials, this increase in the number of AI use cases correlated with AI capabilities maturing to general usability and becoming more available for federal use.

[a] Number of 2025 use cases is based on the number of use cases reported to the AI inventory by June 2025. As of June 2025, IRS had not put out agencywide calls for updates to its AI inventory in 2025, including requesting submission of any new AI use cases.

· Identifying existing AI. There was often a lag between IRS initiating a use case and when it was added to IRS’s AI inventory. For example, 43 AI use cases were initiated before August 2022—when IRS first publicly reported an AI inventory—but did not appear in IRS’s internal inventory until 2023 or later. An additional 27 use cases were added to the inventory several months after they were initiated, and 11 took 1 to 2 years or longer to be reported. For example, in November 2022, IRS began work on a use case that identifies users of IRS systems that might be possible insider threats. That use case did not appear in IRS’s AI inventory until June 2025. According to officials, some delays in use cases being listed in the inventory occurred because requirements for which technologies to include in the inventory changed over time and it took time to communicate AI inventory requirements within the agency.

· Discontinuing or mislabeling projects. Over time, IRS determined that some AI use cases would not continue or that a particular entry in the inventory did not actually involve AI. In some instances, entries were deleted because they were mistakenly labeled as AI. In others, the AI had not been fully developed or put into operation. In June 2025, nine AI use cases were labeled as permanently retired and 14 were labeled as temporarily paused with possibility for future use. Those numbers are potentially understated given resource and other changes at the agency in 2025, as discussed below.[33]

· Changing federal requirements. According to IRS officials, some use cases may have been added to or removed from the AI inventory over time due to changes in government-wide guidance. For example, government-wide guidance on compiling annual AI inventories required agencies to include all regression models regardless of complexity in 2023 but not in 2024.

Most IRS AI Use Cases Focused on Operational Efficiencies, Tax Compliance, and Fraud Detection

As of June 2025, about 100 of IRS’s 126 AI use cases were aimed at improving efficiency in IRS’s internal operations or addressing tax compliance and detecting fraud.[34] About one-third of these use cases were operational, with the rest in earlier development phases.[35] IRS had the fewest AI use cases focused on taxpayer services, but over half of them were already in operation in June 2025 (see fig. 5).

Note: Use cases labeled as “in development” are those listed as being in the following AI life cycle stages in June 2025: initiation, acquisition and/or development, and implementation and assessment. Use cases labeled as “in operation” are those listed as being in the operation and maintenance stage.

Operational efficiency. The number of AI use cases focused on operational efficiencies increased sharply between 2017 and 2025. In 2017, IRS had three use cases in this area. That number rose to 23 in 2023 and to 59 in 2025, correlating with the increase in funds IRS received through the Inflation Reduction Act.

We identified seven general focus areas of operational efficiency use cases. Table 1 describes those focus areas, the number of use cases in development and in operation in each focus area, and relevant examples.

Table 1: Focus Areas and Examples of IRS AI Use Cases Focused on Operational Efficiencies, as of June 2025

| Focus area | Use cases in development[a] | Use cases in operation | Examples |
|---|---|---|---|
| Automation: Conducts processes that would take a human longer to complete. These types of AI use cases are generally commercial productivity tools or other automation tools performing tasks such as transcription, translation, systematically reviewing documents, or analyzing public information. | 15 | 3 | Commercial productivity tools. Some IRS officials have access to tools such as AI-enabled meeting summarization in Microsoft Teams. Other automation tools. Several use cases are aimed at helping IRS systematically review technical documents to achieve particular goals. For example, the Office of the Chief Procurement Officer has a tool that helps review contract records for compliance with policy and transparency requirements. A different business unit is developing a use case to help IRS more quickly review resumes and identify qualified job candidates. |
| Internal chatbots for administrative or mission support: Answers administrative or mission-related employee questions. | 6 | 3 | Administrative. Two chatbots are designed to answer IRS employee questions about IT topics. Another answers employee questions about employment benefits and other topics handled by IRS’s Employee Resource Center. Mission. The Criminal Investigations unit has a chatbot that officials can use to learn more about cryptocurrency transactions. The goal of the chatbot is to provide officials with information that could help speed up the investigative process. |
| Data insights: Analyzes administrative or mission-related data to improve processes or inform future decisions. | 6 | 3 | Administrative. One of IRS’s oldest use cases is designed to leverage past employee attrition data to help predict future staffing needs. Mission. One AI use case helps analyze past Independent Office of Appeals cases, with the goal of improving future examinations and appeals outcomes. |
| IT modernization: Analyzes or assists with rewriting or testing computer code to further IRS IT modernization goals. | 6 | 1 | IRS has several use cases designed to read IRS’s legacy computer code, describe how the code functions, and draft code in modern computer language to replace it. One of these applications focuses on IRS’s Business Master File, which uses a 60-year-old coding language. According to IRS, these use cases will reduce the number of staff needed with skills in old coding languages and help IRS modernize the agency’s systems up to years sooner than without AI tools. |
| IT system security: Analyzes data or completes tasks to improve cybersecurity or help IRS identify potential insider threats. | 5 | 3 | Cybersecurity. One use case monitors access to one of IRS’s data warehouses that contains sensitive taxpayer information. The use case presents a dashboard with information such as the risk level of individual user activity and trends over time. Insider threats. Two use cases monitor internal user activity in IRS computer systems to identify anomalous or suspicious behavior. For each use case, IRS officials review the activity flagged by the AI to determine if further investigation is needed. |
| Digitalization: Extracts data from scanned paper tax forms. | 4 | 0 | These use cases involve extracting data from tax forms submitted on paper. According to IRS, these tools will help it achieve goals such as modernization (e.g., making tax data available digitally for other business processes) or saving money on manual transcription and storage. |
| Workload prioritization: Reviews large volumes of tax and other data to help identify high-priority work. | 3 | 1 | These use cases involve reviewing data, such as submitted tax returns, to identify which ones may be at highest risk for noncompliance. According to IRS’s Chief Data and Analytics Officer, this type of use case overlaps in purpose with those in the AI inventory’s “Tax Compliance and Fraud Detection” category and could reasonably be labeled in either category. |

Source: GAO analysis of Internal Revenue Service’s (IRS) June 2025 AI inventory. | GAO‑26‑107522

[a] Use cases labeled as “in development” are those listed as being in the following AI life cycle stages in June 2025: initiation, acquisition and/or development, and implementation and assessment.
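The counts in Table 1 can be tallied to confirm that they agree with the 59 operational efficiency use cases reported for 2025. This is a minimal sketch for illustration. The dictionary name and the shortened focus-area labels are hypothetical, and the numbers are copied from the table.

```python
# (in development, in operation) for each focus area in Table 1.
table_1 = {
    "Automation": (15, 3),
    "Internal chatbots": (6, 3),
    "Data insights": (6, 3),
    "IT modernization": (6, 1),
    "IT system security": (5, 3),
    "Digitalization": (4, 0),
    "Workload prioritization": (3, 1),
}

# Sum each column, then combine them for the category total.
in_development = sum(dev for dev, _ in table_1.values())
in_operation = sum(op for _, op in table_1.values())
total = in_development + in_operation

print(f"In development: {in_development}")
print(f"In operation:   {in_operation}")
print(f"Total:          {total}")
```

The seven focus areas sum to 45 use cases in development and 14 in operation, for a total of 59.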

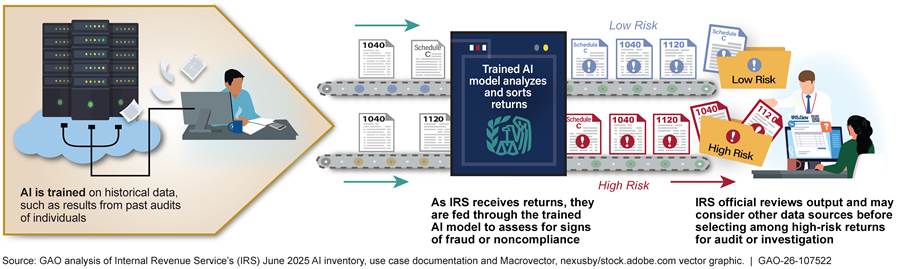

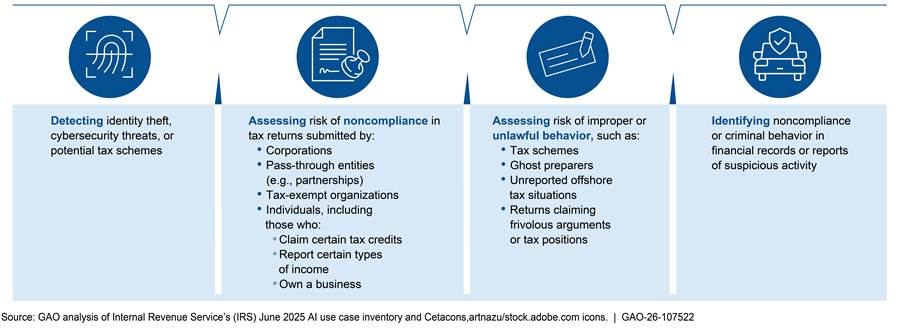

Tax compliance and fraud detection. IRS’s use cases aimed at addressing compliance issues and detecting fraud were often designed to analyze high volumes of data (e.g., tax returns or reports of potential fraud) and identify the riskiest cases or anomalies in the data. In most cases, the AI provides a risk score or recommendation for audit or investigation. Then, an IRS official reviews the output and decides whether to pursue the audit or investigation (see fig. 6).[36]

Figure 6: Example of How Some IRS AI Use Cases Focused on Tax Compliance and Fraud Detection Are Designed

IRS steadily increased its use of AI for tax compliance and fraud detection over time. Between 2017 and 2024, IRS initiated about six new use cases in this area per year. As of June 2025, 18 of IRS’s 43 tax compliance and fraud-focused use cases were operational.

These AI use cases aimed to identify specific types of fraud, potential noncompliance in different taxpayer segments, or criminal behavior, among other purposes. Figure 7 shows where most of these use cases focused, as of June 2025.

Taxpayer services. About half of IRS’s AI use cases aimed at improving taxpayer services as of June 2025 (13 of 24) were public-facing voice bots or chatbots. IRS’s June 2025 inventory included 11 voice bots and two chatbots that addressed taxpayer questions on different tax topics. According to IRS, there were over 5 million sessions with these bots in the 2024 filing season and nearly 25 million sessions in the 2025 filing season.

Other use cases focused on taxpayer services are generally designed to help IRS support taxpayers as they interact with the agency. For example, IRS has AI intended to help simplify tax notices, analyze call transcripts between taxpayers and customer service agents, and verify the identities of taxpayers accessing IRS online accounts.

IRS began investing in AI for taxpayer services later than other AI, but most of these use cases were already in operation as of June 2025. The timing of these investments aligned with enactment of laws focused on enhancing taxpayer services (see fig. 8).[37]

Major Resource Reductions Created Skills Gaps That Could Affect IRS AI Efforts

Substantial Workforce Reductions and Efficiency Efforts Could Greatly Affect AI Plans

In 2025, IRS and Treasury began planning for increased use of AI. IRS officials in IT and the Research, Applied Analytics and Statistics unit (RAAS) said that the agency plans to use more AI in the future. For example, an IT official responsible for AI efforts in one of IRS’s new IT modernization initiatives said his team was pushing the use of many AI tools. He said he expected there to be a broad desire to leverage more AI for the 2026 tax filing season. In RAAS, officials said they continue exploring new ways of using AI.

Further, in July 2025, Treasury’s Deputy Secretary stated that Treasury is launching an AI Strategy that involves applying AI to improve the department’s operations. The Deputy Secretary said Treasury plans to launch pilot initiatives, implement governance structures, and engage with both public and private sectors to implement this strategy, among other things. In August 2025, Treasury’s Chief Information Officer (CIO) issued a memorandum directing all IT organizations to immediately integrate approved AI-driven solutions into processes, workflows, prototyping, and operations.[38]

We identified multiple challenges that could substantially affect IRS’s ability to achieve these plans, including major staffing reductions and other changes.

AI workforce reductions. IRS’s AI workforce substantially decreased between January and May 2025, including in business units heavily involved in AI efforts.

· RAAS. Officials said that staff departures and reassignment of staff to other workstreams will immediately and substantially affect RAAS’s ability to support the development and use of AI across the agency. RAAS officials said they lost 63 employees who supported AI efforts, either full time or as part of their set of duties—about 10 percent of RAAS’s January 2025 staffing level.[39] They said these employees retired, resigned under the Deferred Resignation Program, were terminated due to their status as probationary employees, or were placed on long-term administrative leave between January and May 2025.[40] IRS ultimately rehired some staff who had been let go due to their status as probationary employees, but officials said many of those employees and other AI-focused employees in RAAS have been reassigned from AI-focused work to other workstreams due to attrition elsewhere in RAAS.

A majority of the 63 employees RAAS lost were directly involved in designing, developing, or overseeing AI projects, according to officials. Officials said over a dozen supported IRS’s ability to research new AI approaches, including work on new analytics methods and AI tools and infrastructure (e.g., hardware needed for AI). IRS’s AI governance team also said it lost over three-quarters of its staff, including employees and contractor support that provided additional capacity for oversight activities, such as ongoing maintenance of IRS’s AI inventory.

Overall, these departures resulted in AI skills gaps that officials said could be hard to close.[41] For example, AI experts who officials said had significant AI education and prior experience were let go due to their status as probationary employees. Those employees supported the development and use of AI, including generative AI, across IRS.[42] According to IRS officials, as of September 2025, some of these employees were ultimately rehired while others found employment elsewhere. RAAS officials said, in general, skills gaps created by the departures of AI-focused employees will be hard to close because of the nature of the terminations, in-office work requirements, and individuals finding other employment.

In addition, IRS remains under an indefinite hiring freeze.[43] IRS is also subject to the government-wide requirement to hire no more than one employee for every four employees who depart.[44] RAAS officials said this prevents the agency from addressing these skills gaps.

· Chief Technology Officer AI team. IRS’s Chief Technology Officer (CTO) had a team focused on AI initiatives that could serve as proofs of concept for operational improvements across the agency, such as code generation tools, according to IRS’s Director of AI Innovation in IT. In January 2025, the team supported 11 use cases. IRS reported that, as of May 2025, only half of the staff on this team remained and two contracts providing staffing support on these efforts were not renewed. As a result, the CTO paused or suspended support of 10 out of the 11 use cases due to resource limitations.[45]

· Leadership involved in AI efforts. In addition, IRS officials reported that many senior officials in business units that are heavily involved in developing and deploying AI were either placed on administrative leave or resigned. For example, IT officials told us IRS’s Chief of AI in IT was placed on administrative leave in March 2025. In RAAS, officials said three directors of groups heavily involved with supporting the use of AI across IRS resigned as of May 2025. These departures resulted in the loss of expertise and institutional knowledge essential for understanding potential applications, benefits, and drawbacks to the use of AI in the IRS context, according to senior officials in both offices.

Other IRS workforce reductions. IRS officials explained how changes to IRS workforce levels throughout the agency had short- and long-term effects on IRS’s ability to use AI and update the AI inventory.

· Office of the Chief Procurement Officer. Office of the Chief Procurement Officer (Procurement) officials said the office lost over 40 percent of its staff between May and June 2025. About 80 percent of IRS’s use cases in June 2025 involved some degree of contracting.[46] Staff across the agency rely on Procurement officials to support their ability to acquire AI tools or contract out for AI support services. IRS Procurement officials said these departures could have immediate effects. For example, they said there could be significant delays in entering into new contracts and modifying or renewing existing ones, including contracts that support mission-critical programs.

RAAS officials said that Procurement staff departures had already resulted in loss of knowledge about IRS’s existing contracts. Between February and June 2025, officials said that Treasury and the Department of Government Efficiency sent repeated requests for officials to justify individual contracts. According to RAAS officials, these requests varied in terms of which contracts were being reviewed and types of questions asked. RAAS officials said answering some questions about these contracts was difficult because IRS procurement staff most familiar with technical contract details were no longer at the agency.

· Staff changes across the agency. In the short term, staff departures may pose challenges for updating the AI inventory. IRS officials said departures of staff who work on AI across the agency occurred on short notice. As a result, remaining staff could have a harder time understanding the status of ongoing efforts, such as whether individual use cases are active, paused, or cancelled. In addition, IRS may not be able to deploy some existing AI. For example, one RAAS official said the agency may no longer deploy an AI model focused on prioritizing tax returns for audit because the program may no longer have staff to conduct the audits.

In the long term, officials said that reductions in staff may lead to IRS not having sufficient data to develop or update and refine quality AI models. Specifically, they said decreases in enforcement staff will mean fewer audits are conducted. By conducting fewer audits, IRS will have less data on audit outcomes. As a result, IRS may not have sufficient data to reliably train new AI models or retrain existing models when needed, such as when there are new tax laws that may result in new compliance behavior. Without quality AI models, IRS officials said the agency may have diminished capability to improve IRS’s ability to collect revenue and reduce the number of audits that do not result in a tax change.

Procurement changes. In 2025, Treasury implemented new policies that changed procurement processes to comply with EOs related to cost efficiency.[47] IRS officials told us that these policies affect IRS’s ability to use AI. Specifically,

· Treasury introduced a policy requiring Secretary-level approval to enter into and execute all new contract awards, purchase orders, and any other obligation of funds, among other requirements.[48] RAAS and IT officials said this change introduced uncertainty about IRS’s ability to continue developing and deploying use cases. For example, officials said they had to justify a contract for licenses for a platform that provides underlying infrastructure for an IRS data warehouse and supports several AI use cases. They said the contract was only renewed for 6-month increments, and RAAS officials will have to justify the contract for each renewal.

· Treasury introduced a policy that promotes the use of commercial solutions and requires officials to provide written justification if they are seeking to acquire a noncommercial solution.[49] Treasury’s CIO told us he would like for IRS to use more commercial AI products that the General Services Administration has certified as meeting certain information security requirements.[50] He said it would be more cost efficient for IRS to leverage these types of preapproved commercial tools than independently develop or contract for custom-developed AI models. RAAS officials stated that certain commercial tools can be sensible solutions for IRS when appropriate system and use controls are in place.

· Several contracts supporting AI use cases were terminated. For example, IRS cancelled the contract for one of IRS’s language translation use cases. Officials responsible for the use case said they were not consulted on this cancellation. Officials who used this tool said it had provided benefits over a different IRS AI use case for translation, including the ability to improve the glossary of tax terms the AI uses to complete translations. While they said the remaining language translation tool had some technical advantages, IRS IT officials had been working to incorporate those same features in the cancelled one.

These changes will affect the status of an unknown number of IRS’s AI use cases. For example, use cases may or may not remain deployed, development may or may not be able to continue, and deployment dates could be pushed out due to changes in staff capacity. The AI governance team requested comprehensive updates to the AI inventory in August 2025 after OMB published 2025 reporting requirements. OMB guidance directed agencies to submit their AI inventory to OMB in December 2025 and publicly release it in January 2026.[51] Those updates would reflect changes to the status of use cases such as discontinuations (e.g., due to contract cancellations or program changes).

IRS Has Not Assessed AI Skills Gaps nor Developed Plans to Close Them

IRS has not identified the skills the agency needs to support its use of AI nor developed a plan to address AI skills gaps, according to IRS’s Chief Data and Analytics Officer and a Human Capital Office (HCO) official responsible for workforce planning. IRS’s Internal Revenue Manual says business unit human resources liaisons are to work with workforce planning staff in HCO to support workforce planning activities, including efforts to identify and address skills gaps. We previously reported that identifying the critical skills and competencies needed to support agency efforts and developing strategies to address them are key steps in the workforce planning process.[52] Further, OMB’s 2025 memorandum on AI governance strongly encourages agencies to prioritize recruiting, developing, and retaining staff who have technical experience to support the use of AI.[53]

AI governance officials said there have been some informal conversations in RAAS about AI skills gaps resulting from staffing reductions in 2025. However, they said there have not been any formal conversations, such as with HCO or other business units, to identify staffing needs or develop a plan for addressing them. They said officials across the agency have instead been focused on adapting to significant organizational changes. Further, the HCO official responsible for workforce planning said Treasury and IRS need to finalize strategic plans before the agency can assess and plan to close any skills gaps.[54]

IRS’s Chief Data and Analytics Officer said the agency has short-term hiring plans, but they focus on customer support and audit staff, not staff with AI skills or expertise. HCO’s workforce planning official said the hiring plan includes some requests to hire computer engineers, computer scientists, and a data scientist, but none was specifically identified as contributing to AI efforts. One senior AI governance official noted that IRS previously had direct hire authority for data scientists, which helped IRS hire employees with AI skills. However, under IRS’s hiring freeze, the agency is unable to use its direct hire authority for data scientists.

While AI governance officials said they would like to hire for positions such as data scientists, engineers, and additional governance officials, they stated IRS must instead rely on training current staff. IRS’s Chief Data and Analytics Officer described several Treasury initiatives focused on training existing staff in AI skills, such as data literacy. In addition, an official said there has been increased demand for AI training opportunities alongside increased interest in using AI tools at the agency. Training existing staff could help address some, but not all, of IRS’s AI skills gaps.

Without staff to support AI capabilities and oversee individual use cases, IRS is at risk of initiating projects the agency does not have resources to support or deploying AI systems without resources to ensure they are used responsibly. Although not all workforce tools, such as hiring, are currently available, understanding the agency’s workforce in terms of AI skills and having a plan for staff with the skills to support the use of AI is foundational for IRS to reap its benefits and mitigate its risks.

IRS’s AI Inventory Did Not Always Contain Quality Information and Omitted Use Cases

IRS’s AI Governance Policies Include Inventory Requirements

Federal law and an executive order require agencies to prepare and maintain an inventory of current and planned AI use cases and to publicly report an inventory annually.[55] IRS’s AI governance policy and internal guidance, such as job aids and internal web pages, are available to help use case owners meet those requirements. Specifically, IRS’s March 2025 AI policy requires:

· Adding new use cases in IRS’s AI inventory. Use case owners are to submit new AI use cases to the inventory as soon as they are initiated. Initiation generally involves managers or executives in a business unit formally approving resources for an AI use case. IRS’s AI inventory generally contains information that OMB and Treasury require to be collected as part of agency AI inventories (e.g., life cycle stage), as well as other IRS-specific information (e.g., anticipated deployment date).

· Documenting key details such as model design and data sources. Use case owners are to submit additional documentation for their AI use case before putting the use case into operation. That documentation includes information such as the AI model type, the inputs and outputs of the model, the data used by the model, and whether the data contain any personally identifiable information.

· Coordinating with internal stakeholders at different points in the AI life cycle. As mentioned above, business units across IRS are responsible for initiating (i.e., approving resources for) new AI use cases that will support their business functions. As use case owners begin to develop their AI use cases, IRS policy requires that they work with stakeholders across the organization to follow any related policies, such as IRS’s privacy and IT security processes. Once developed, the business unit approves the AI use case to go into operation.

IRS’s February 2026 AI policy also reintroduced a process for internal subject-matter experts, including tax professionals, data scientists, and lawyers, to review use cases designated high-impact. Those use cases require certain risk management practices according to OMB guidance.[56] The 2026 policy states that this team of subject-matter experts serves as an independent reviewer to ensure the risk management practices are completed and meet IRS policy requirements.

· Updating use case entries regularly and when key changes occur. Use case teams are to validate or update their AI inventory entries and additional documentation at least annually or when directed by the AI governance team. IRS’s AI policy also states that use case teams are required to update this information when changes occur that affect the accuracy of the records, such as changes to the use case’s name, purpose, life cycle status, risks, or benefits.

In 2024, IRS created a team to help the agency navigate and meet federal requirements for AI governance. IRS’s Chief Data and Analytics Officer, who is also IRS’s Responsible AI Official, oversees the AI governance team. The team can support AI use case owners in navigating the governance process and complying with government-wide and IRS requirements. For example, the team has made internal educational presentations to officials across the agency to socialize AI requirements. In October 2024 and August 2025, the team also issued agencywide requests for officials to submit AI use cases to the inventory or update information as required by OMB.

The AI governance team also developed internal guidance aimed at ensuring the AI inventory contains accurate information. For example, the team

· Established a quality assurance checklist for inventory entries. The AI governance team developed a checklist it can use to review each entry in the AI inventory for accuracy. For example, the checklist directs the team to verify whether the entry includes sensitive information that should not be publicly released. Officials told us they work with use case owners as needed to improve their use case entries and related documentation.

· Created job aids for use case owners. The AI governance team developed an internal website that outlines the AI inventory requirements. They also developed a document to help use case owners understand the requirements for each field in the inventory, which is linked on IRS’s AI inventory submission form. For example, the job aid directs owners to name the use case so that the intended purpose is clear.

Information Quality in IRS’s AI Inventory Varied

IRS has taken steps to ensure its AI inventory contains quality information, such as having a policy requiring clear and detailed information necessary to understand the use case. However, we found 62 different entries in IRS’s June 2025 inventory had at least one quality issue. Ensuring IRS has quality information is a key principle of internal control.[57] Yet, the inventory did not always contain information that fully reflected either OMB guidance, IRS internal guidance, or leading practices. We found instances in which:

· Entries did not state expected benefits of the AI use case. We found that over 25 percent of use cases in IRS’s June 2025 inventory did not state how the use case was expected to benefit the agency. The job aid for use case owners says they are to state the expected benefit of the AI use case for a lay audience. OMB continues to require this information in its 2025 guidance for federal agencies’ AI inventories.[58]

· Entries omitted key information related to the status of the AI use case. For example, nearly 10 percent of use cases in IRS’s June 2025 AI inventory did not state the AI use case’s status, such as whether it is active or paused, or life cycle stage, such as in development or already in operation.[59] Two of those entries also did not state the date when the use cases became operational even though the use cases were in operation. The job aid for use case owners says all entries are to have these fields completed. OMB continues to require fields for life cycle stage and operational date in its 2025 guidance for federal agencies’ AI inventories.

· Entries did not list all business units involved with the AI use case. At least 10 percent of entries in IRS’s June 2025 AI inventory did not list all business units involved with the AI use case. For example, officials in RAAS and IT often help other business units develop AI use cases but were not always listed as one of the business units involved with the use case. The job aid for use case owners says they are to list the business units involved with both developing and using the AI when deployed. IRS continued to require use case owners to report this field in its AI inventory after updating the inventory to align with OMB’s 2025 AI inventory guidance.

· Entries would have been more appropriately listed as two or more use cases. One AI use case originally developed to assist with selecting tax returns for audit for research purposes is now used for selecting risk-based audits for tax noncompliance in two other business units. All three of these use case scenarios were listed in one AI inventory entry. IRS officials disagreed that the application of this AI use case in other business units constituted separate use case scenarios, since all have a similar goal.

However, our AI Accountability Framework discusses the importance of assessing the appropriateness of expanding a use case beyond its original context.[60] Separating applications of AI based on their use in different business units and in programs that touch different subsets of taxpayers would enable IRS to more accurately report its use of AI in line with OMB guidance. This can include assessing when a use case goes into operation in the new context, any unique risks and benefits in the new context, and whether each particular use case scenario would be considered high-impact according to OMB guidance.

Another entry in the inventory included two developer productivity tools used in the same workflow: a tool to translate computer program code from one programming language to another and a chatbot for asking questions about the code. The AI use case owners confirmed these are two separate, independently operating tools. IRS’s AI governance team officials said these tools are used in the same “use case scenario” and therefore disagreed that these tools should be separate AI use case entries. They said listing these two tools as one use case does not conflict with the definition of a use case, which they said refers to a “scenario” in which AI is used and allows for multiple models to be used to achieve the objectives of the use case. While IRS’s definition of a use case allows for several models, it also says a use case is a specific business use of an AI technology.

These two AI tools may collectively contribute to a broader objective of developer productivity. However, the project team’s confirmation that each tool operates independently and serves a unique purpose—translating computer code and helping users understand code, respectively—suggests it would be more appropriate to list them separately. Our AI Accountability Framework states that agencies should evaluate the performance of AI by separating a system into components with clear differences in functionality.[61] Similar to the expanded use case, having separate entries for these separate tools would better enable IRS to weigh whether the benefits of each AI tool outweigh the risks.

We found two key factors contributed to the deficiencies in IRS’s AI inventory.

· Lack of comprehensive quality controls for information in the inventory. As of June 2025, IRS did not have a comprehensive quality assurance process for ensuring completeness and accuracy of entries in the AI inventory. IRS had some quality controls when there were imminent OMB reporting requirements. For example, in 2024, IRS took steps to ensure key fields in the AI inventory were complete.[62] This included life cycle stage, anticipated deployment date, and other information about the status of the AI use case. Officials said they took similar steps in 2025.

However, following the agencywide staffing reductions, in May 2025, AI governance officials said they allowed partial information in the inventory, in part, because of limited capacity. They also said they would rather have use case owners submit partial information about a use case than not enter their AI in the inventory at all. Officials said partial information—including entries with only the name, points of contact, and business unit for a use case—provides enough awareness of the AI to the AI governance team to comply with OMB requirements in a timely manner. Still, IRS’s Chief Data and Analytics Officer said that having stronger controls in place is a best practice.

IRS also previously introduced some quality assurance reviews in its AI governance process in 2024, such as reviewing each use case entry for quality. Yet, in early 2025, IRS suspended its quality assurance reviews because of reductions in staff for the AI governance team. Officials said these reviews can be time intensive because they require meeting with the AI use case owners to thoroughly understand the use case and ensure it is accurately reflected in the inventory. IRS officials said that they would like to implement additional quality assurance processes for the AI inventory, but they did not have resources to do so.

· Lack of comprehensive internal guidance on AI inventory entries. The quality assurance checklist that the AI governance team uses to review entries in the inventory is missing some key steps. The checklist includes some steps that would enable IRS to comply with AI inventory reporting requirements, such as to verify whether an inventory entry contains sensitive information that would need to be removed if made public. However, it does not include other steps, such as checks to ensure that each entry describes a single use case and all relevant business units are listed.

In addition, the job aid for use case owners does not state when, if at all, to fill out multiple inventory entries. For example, it does not state when separate entries may be necessary when expanding a use case to a new business unit or if a project uses multiple separate AI systems.

Implementing mechanisms to ensure each AI use case inventory entry contains quality information will improve IRS’s ability to oversee the inventory and comply with government-wide requirements in a complete, timely, and accurate manner. While it will take resources to review the large quantity of existing AI use case entries in the inventory, comprehensive quality controls—such as requiring key fields in the inventory to be completed when information is first submitted—could help AI governance officials prioritize reviews. Comprehensive internal guidance could also lead to higher-quality information in the inventory entries. With these steps, IRS can better ensure it has a high-quality inventory that reflects how IRS uses AI across the agency. IRS would also be positioned to assess the effects of any new Treasury or federal AI guidance on each AI use case and ensure those use cases comply with requirements, such as taking risk-management steps for use cases designated high-impact.
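As an illustration only, a control that requires key fields at submission could take roughly the following form. This is a minimal sketch, not IRS's submission form or process: the field keys, the `find_missing_fields` helper, and the sample entry are hypothetical and are only loosely based on the inventory fields discussed in this section (name, expected benefit, status, life cycle stage, operational date, and business units).

```python
from typing import Dict, List

# Hypothetical field keys; the actual inventory schema is not described here.
REQUIRED_FIELDS = [
    "name",
    "expected_benefit",
    "status",
    "life_cycle_stage",
    "business_units",
]


def find_missing_fields(entry: Dict[str, object]) -> List[str]:
    """Return the required fields that are absent or empty in an entry."""
    missing = [field for field in REQUIRED_FIELDS if not entry.get(field)]
    # Assumption: an operational date is expected only once a use case
    # is recorded as being in operation.
    in_operation = entry.get("life_cycle_stage") == "in operation"
    if in_operation and not entry.get("operational_date"):
        missing.append("operational_date")
    return missing


# A made-up partial entry for illustration.
partial_entry = {
    "name": "Example use case",
    "business_units": ["Example unit"],
    "life_cycle_stage": "in operation",
}

print(find_missing_fields(partial_entry))
```

A check of this kind could either block an incomplete submission or simply flag it, which would let reviewers with limited capacity start with the entries that have the most gaps.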

IRS’s AI Inventory Omitted Some Use Cases

During our audit, we identified examples of AI use cases that were not listed in IRS’s AI inventory. They fell into two sometimes overlapping categories.

· Contracted AI. In June 2025, we reported that IRS’s inventory did not include AI used by ID.me for verifying taxpayer identities, including their use of biometric identification technology.[63] We recommended that IRS ensure that any use of AI in contracts for identity proofing is included in IRS’s AI inventory and goes through IRS’s AI oversight process. IRS agreed with our recommendation. As a result, IRS started adding entries to its AI inventory for two ID.me use cases.

· AI in a law enforcement context. IRS’s Criminal Investigation unit has several AI-enabled tools, which officials said are also contracted. These tools help to gather online information to build criminal cases. As of June 2025, these tools were not included in IRS’s AI inventory.

IRS’s 2024 and 2025 AI policies clearly stated that all applications of AI at IRS must be reported in IRS’s internal AI inventory. In addition, federal law, executive order, and guidance require agencies to document generally all AI use cases, including those supported by or obtained through contracts, in their AI inventories.[64] OMB guidance specifies that AI governance requirements generally apply to AI used for law enforcement purposes with limited exceptions.[65] OMB guidance requires agencies to report any use case that is deemed to be high-impact in their AI inventories and lists several types of law enforcement AI use cases that are presumed to be high-impact.[66]

We found several factors contributed to this issue.

· Policy and communication gaps. Some IRS officials did not understand IRS’s guidance. IRS’s 2025 AI policy stated that in general all unclassified AI should be reported in the agency’s internal AI inventory. However, the policy did not explicitly state that contracted AI should be added to the AI inventory or that the internal inventory requirement applies to law enforcement use cases. In addition, neither agencywide requests for additional inventory entries nor presentations across the agency about AI governance requirements mentioned that the requirements apply to these types of AI. As a result, officials responsible for missing AI use case entries told us they were aware of IRS’s AI governance process but not that it applied to their contracted AI use cases.

Criminal Investigation officials said OMB’s 2024 AI inventory requirements were unclear about the inclusion of commercial AI tools. They said this is partly why the use cases were not included in the inventory. The officials said OMB’s 2025 AI inventory requirements were clearer and they planned to submit seven additional use cases to IRS’s AI inventory. Criminal Investigation officials also said they might have excluded use cases from the AI inventory because including them would have inappropriately revealed information to those outside of law enforcement.

Reporting all AI use cases in an internal inventory is a first step in IRS being able to ensure that all of its AI use cases—and in particular, any use case designated as high-impact—comply with government-wide AI oversight requirements and are reported to Treasury and OMB when necessary. IRS could have officials submit information about sensitive law enforcement use cases at a high level to the AI inventory to avoid inappropriately revealing sensitive information. In that case, IRS could then maintain a separate, more detailed AI inventory for sensitive law enforcement use cases.

While our report was at the agency for comment in February 2026, IRS published its updated AI governance policy. The new policy included more detail on requirements for use cases involving contracts and sensitive use cases. For example, it specifies when AI developed by third parties or AI services hosted by external service providers are subject to IRS’s AI policy. It also includes details on how to treat sensitive use cases, such as AI used for law enforcement purposes. The addition of this information in policy is a positive step in ensuring IRS has a complete inventory. Still, IRS will need to include this additional specificity in its future communications related to AI governance to ensure complete reporting.

· Steps not taken to identify additional existing contracts involving AI. As of May 2025, IRS Office of the Chief Procurement Officer (Procurement) officials said they had not undertaken efforts to review all of the agency’s existing contracts to determine if those contracts involve the use of AI. While IRS continues to add some preexisting contracted AI use cases to the inventory, IRS could still be missing additional existing contracts that involve the use of AI because the agency still has not examined thousands of contracting documents, according to an IRS procurement official.[67] As a result, some IRS officials responsible for contracted AI may still be unaware of AI governance requirements for their contracts or use cases. In the case of IRS’s contract with ID.me, officials said they were not aware that ID.me was using AI as part of its services.

IRS has at least two ways to review existing contracts for potential uses of AI. One method is to perform keyword searches on contracting documents. IRS Procurement officials told us that keyword searches could have limitations because contract documents may not use the phrase “AI” or explicitly describe AI technologies. The officials explained that acquisitions focus on desired performance or outcomes, not the technology used to complete them. However, an official who pilot-tested keyword searches said this approach would be feasible, in part, because of improved technical capabilities implemented in the past year.

Officials noted they conducted searches using terms in OMB guidance. However, they did not coordinate with the AI governance team to identify any additional AI-related keywords and examine search results for the most likely potential AI use cases. Doing so could mitigate limitations related to the nature of reviewing contracting documents and provide more tailored results, thus providing IRS with quality information.[68]

IRS also has commercial software that should contain the information and capability for a comprehensive search of contracts. IRS began using the software in 2025 to facilitate contract management. IRS included the software in its June 2025 AI inventory, and the entry mentions that it has AI-enabled functionality for searching the full text of contract documents. IRS could explore using this functionality to identify possible uses of AI in other contracts. Despite the functionalities available to them, Procurement officials did not have plans to review existing contracts for AI use cases as of May 2025. Doing so is a first step in ensuring those responsible for AI contracts comply with AI governance requirements.

· Steps not yet taken to identify new contracts involving AI. As of May 2025, IRS Procurement officials said they had not yet taken steps to create a process for identifying which new contracts involve AI. For example, IRS had not updated Procurement’s internal acquisition checklist to highlight AI governance requirements. Procurement officials told us that they planned to update their internal guidance as part of efforts to meet new OMB requirements that went into effect in late 2025.[69] For example, OMB directed agencies to require, when appropriate, that vendors disclose the use of AI.

IRS officials told us that this and efforts to implement other new OMB requirements will help them close the information gap about vendor use of AI technologies. However, Procurement officials also told us that they were awaiting further guidance from Treasury before updating their policies. Agencies had until December 2025 to implement new acquisition policies. Because these requirements were not effective until later in 2025 and IRS had not received guidance from Treasury on implementing OMB requirements, we are not making recommendations in this area.

IRS officials cannot ensure AI is used responsibly and in alignment with other government-wide goals for AI if they are not aware of how employees and contractors use AI. Having a complete inventory that includes all of IRS’s AI use cases is an important first step in meeting government-wide AI requirements, such as conducting oversight of high-impact use cases. Further, a complete inventory will help ensure IRS is adhering to the evolving landscape of government-wide AI requirements. This is especially important in a resource-constrained environment, where resources may need to be prioritized.

IRS’s Management of AI Could Benefit from a More Strategic Approach

Fragmented Oversight Risks Potential Inefficiencies in IRS’s AI Investments

IRS’s AI governance process has included several entities with oversight of individual AI use cases, but none were responsible for facilitating or engaging in a coordinated, strategic approach to managing AI investments across the agency.

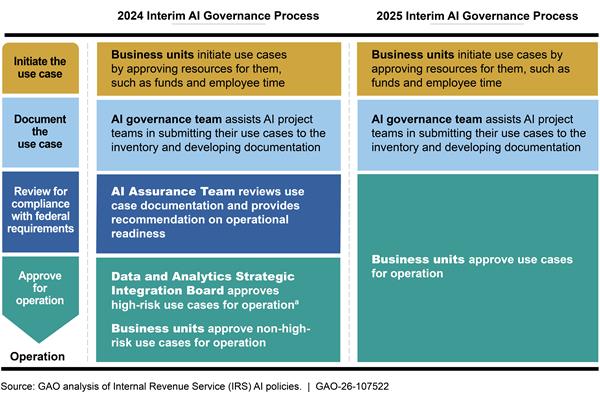

Previously, IRS’s 2024 AI governance policy required business units to send their use cases through a centralized review process. The level of centralized reviews varied based on risk level as defined by OMB and occurred after business units initiated the use cases through their own processes.[70] These reviews did not include checking against the AI inventory for similar use cases.

In March 2025, IRS revised its AI policy to suspend these centralized reviews in response to changing federal guidance.[71] This change further fragmented IRS’s approach to AI governance, placing oversight responsibilities on each business unit using AI. See appendix III for more information on IRS’s 2024 and 2025 AI governance processes.

Under the fragmented approaches of the 2024 and 2025 AI policies, IRS had several use cases initiated by different business units that had similar goals and engaged in similar activities.[72] For example, our review of IRS’s inventory found:

· AI for digitization. IRS’s June 2025 AI inventory had at least six AI use cases that engage in similar activities for extracting data from tax forms submitted on paper.[73] These use cases were acquired from different vendors and span five different business units. In May 2025, Treasury’s Chief Information Officer (CIO) told us that IRS had paused its IT modernization projects, including some related to digitization, and was reassessing these efforts. In August 2025, IRS officials told us that two of the six digitization use cases had been retired as a result.

· AI for legacy code modernization. IRS’s June 2025 AI inventory contained two similar use cases for assisting IT officials with modernizing legacy computer code. These use cases were acquired from different vendors. In May 2025, Treasury’s CIO told us that IRS had paused and was reassessing these efforts. He also said that he would like for IRS to use more commercial AI products as part of its IT modernization efforts.[74] He said this includes one new AI tool to assist with modernizing legacy code. However, according to the Treasury CIO, he had not reached out to coordinate with the IRS AI governance team to consult on these new efforts, such as to identify if there were similar existing efforts at IRS. In September 2025, IRS officials told us that both of these use cases had been retired.

· AI that identifies potential issues for audit. IRS’s AI inventory contains two AI use cases overseen by different business units that engage in similar activities for analyzing the same tax form. Both use cases assist officials with identifying potential issues for audit. IRS’s Small Business/Self-Employed unit initiated its use case before IRS’s AI inventory existed. Officials responsible for the use case said reviewing the inventory would have enabled them to consider if there was an existing use case to build upon rather than building something from scratch. They said they may have avoided spending the time and resources to develop their newer use case if they had known sooner about the use case developed by the other business unit.

OMB guidance requires agencies to maximize the value of existing investments to ensure speedy deployment and to protect taxpayer dollars from duplicative spending, including sharing resources within an agency.[75] We have previously reported that improving coordination and collaboration across and within an agency can help reduce or better manage potential overlap and duplication.[76] Our leading practices outline various approaches that could promote better coordination and collaboration among IRS entities responsible for AI use cases. For example, to the extent permissible by OMB and Treasury guidance,[77] IRS could:

· Designate a single central entity with oversight responsibility. As previously described, IRS’s AI governance approach became more decentralized in its 2025 policy, further limiting coordination and collaboration among the entities responsible for AI oversight.[78] According to our leading practices for collaboration, designating a single leader could help IRS centralize accountability and speed decision-making.[79] IRS could task a single entity, such as one of the entities previously tasked with centralized reviews, with responsibility for coordinating regular assessments of new AI use cases for unwarranted overlap or duplication.

Alternatively, the AI governance team could be well positioned to take this role, given its role in managing the AI inventory. As the officials managing IRS’s inventory, they have visibility and knowledge of every AI use case at the agency. In addition, the AI governance team has an informal process to help assess potential new AI use cases. Specifically, the team uses an internal website, the Enterprise AI Front Door, to coordinate with business unit staff interested in using AI, assess ideas for new AI use cases, and prevent duplicate work by assessing if new ideas could leverage existing resources.

AI governance officials told us that resource constraints, including significant reductions in staff, make it challenging to keep pace with submissions to the Enterprise AI Front Door and take on any additional oversight roles. Nevertheless, the AI governance team may still be best positioned to identify potential opportunities for business units to leverage existing resources early in the AI life cycle.

· Clarify the business units’ roles and responsibilities for initiating AI use cases. According to AI governance officials, the process for initiating new AI use cases may differ between business units. Business unit officials can voluntarily review IRS’s AI inventory for opportunities to coordinate and collaborate with other business units on similar efforts, but doing so is not required in IRS’s AI policy.

According to our leading practices for collaboration, clarifying roles and responsibilities in policy could help standardize decision-making and increase communication between business units.[80] IRS could clarify business units’ roles and responsibilities when initiating a new AI use case and specify that the initiation process should involve assessment for potential overlap or duplication with existing use cases. An assessment during the initiation process would ensure IRS is leveraging resources it has already committed prior to investing significant resources in a new use case.
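As an illustration only, an assessment for potential overlap at initiation could start with a simple comparison of a proposed use case description against existing inventory entries. The Python sketch below uses word-overlap (Jaccard) similarity. The inventory entries, the stop-word list, the threshold, and the function names are all hypothetical, and the report does not describe any such tool at IRS. A flagged match would only prompt a human review, not a determination of duplication.

```python
import re

# Hypothetical list of common words to ignore when comparing descriptions.
STOP_WORDS = {"a", "an", "the", "from", "for", "of", "to", "and", "with", "in", "on"}


def tokens(text):
    """Return the set of lowercase words in text, excluding stop words."""
    return {word for word in re.findall(r"[a-z]+", text.lower()) if word not in STOP_WORDS}


def jaccard(a, b):
    """Jaccard similarity of two sets: size of intersection over size of union."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def similar_use_cases(new_description, inventory, threshold=0.3):
    """Return (name, score) pairs for inventory entries similar to the proposal.

    `inventory` maps a use case name to its description. The threshold is an
    assumed starting point that would need tuning against real entries.
    """
    new_tokens = tokens(new_description)
    matches = []
    for name, description in inventory.items():
        score = jaccard(new_tokens, tokens(description))
        if score >= threshold:
            matches.append((name, round(score, 2)))
    # Highest similarity first; ties broken by name for stable output.
    return sorted(matches, key=lambda match: (-match[1], match[0]))
```

A word-overlap comparison is deliberately simple: it can miss entries that describe the same activity in different words, so it would supplement rather than replace review by officials with knowledge of the inventory.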

According to AI governance officials, IRS’s oversight approach has always prioritized innovation and speed in meeting business units’ needs. In February 2026, IRS published an updated AI governance policy which generally continued the decentralized approach of the 2025 policy but reinstated centralized reviews over certain aspects of high-impact use cases. However, it did not include a process to improve coordination to identify potential overlap or duplication.

A more coordinated, strategic approach can help IRS better manage its fragmented approach to AI governance. Specifically, it could help ensure staff exploring a new use case identify opportunities to leverage existing resources or lessons learned. For example, business units may be able to leverage existing contracts or models developed in-house for other business needs.

Either of these approaches can help IRS improve its ability to strategically manage AI investments across the agency and reduce the risk of unwarranted overlap or duplication in future AI use cases. In particular, increasing coordination and collaboration among IRS business units can help IRS maximize the value of existing AI investments while protecting taxpayer dollars from duplicative spending, in accordance with OMB guidance. While additional coordination and collaboration would require more work at a time when IRS is resource constrained, such efforts early in the AI governance process could ultimately save IRS time and money.

IRS Does Not Track How Individual AI Use Cases Contribute to Strategic Goals

IRS does not have a process to ensure its AI investments are contributing to agency goals. In April 2023, IRS published a strategic plan that outlined agencywide goals and guided its investments under the Inflation Reduction Act.[81] However, IRS does not track how its AI use cases align with these strategic goals and does not collect performance metrics on AI use case contributions to meeting the goals.

· Alignment to strategic goals. IRS does not require use case owners to report on how their AI use cases align to IRS’s overall strategic goals. IRS collects a variety of information from individual use case owners as part of its AI inventory process. For example, use case owners are asked to describe what the AI use case intends to achieve and to select an “IRS category” (i.e., whether it contributes to operational efficiency, tax compliance and fraud detection, or taxpayer services). AI governance officials said the “IRS category” field was sufficient to show the prevalence of AI in the major mission areas at IRS. They also explained that a use case’s connection to the strategic goals may be unclear because AI may be one component of a larger project.

However, officials said use case owners should be able to identify how their AI connects to the agency’s goals. IRS officials told us that going forward they were considering adding a field in the AI inventory about alignment to strategic goals. IRS officials explained that the updated Treasury and IRS strategic goals must be established before adding this field to the inventory. In April 2025, Treasury internally shared the strategic goals and priorities for its draft strategic plan for fiscal years 2026-2030. According to IRS officials, some offices in IRS have started taking steps to align their efforts to these new goals.

· AI performance metrics. IRS has not established AI-specific success measures it could use to track how well individual use cases help achieve IRS’s strategic goals or compare the performance of similar use cases. By collecting data, such as the amount of staff time saved by replacing a manual process with AI, IRS could better understand how its AI initiatives are improving operational efficiency throughout the agency. As part of the 2024 and 2025 AI governance process, IRS required AI use case owners to report some performance metrics for each use case. However, those metrics were about the technical performance of the AI model and not the extent to which the use case contributed to agency strategic goals.

IRS officials noted that OMB guidance requires agencies to use specific metrics or qualitative analysis to assess the effects of their AI. However, that requirement only applies to AI use cases the agency designates as high-impact.[82] In addition, IRS officials explained that AI not designated high-impact might also have notable programmatic effects, such as improved productivity or improvements to taxpayer assistance. Understanding the extent of this effect for all use cases, regardless of whether a use case falls under OMB’s high-impact designation, is an important step in IRS strategically managing its entire AI inventory.

OMB guidance states that an agency’s strategic goals and objectives should be used to align resources, such as AI investments, and guide decision-making to accomplish priorities.[83] It also recommends that agencies build a portfolio of evidence, such as performance measurement, to support this decision-making.[84] While this guidance is directed at Treasury, we have previously reported that agency components, such as IRS, can use federal guidance as leading practices for their own planning.[85] We have also reported that key practices such as defining goals and collecting evidence can help agencies effectively manage and assess the results of their efforts.[86]