Report to Congressional Requesters

United States Government Accountability Office


For more information, contact: William Russell at RussellW@gao.gov or Candice N. Wright at WrightC@gao.gov.

What GAO Found

Federal agencies reportedly more than doubled their use of artificial intelligence (AI) from 2023 to 2024, and they used a range of approaches to acquire additional AI capabilities through fiscal year 2025. GAO identified trade-offs facing agencies as they acquire AI, and some associated challenges and benefits. For example:

Agency-directed vs. vendor-driven approaches. Some agencies awarded new contracts in pursuit of AI solutions. In other instances, industry introduced capabilities to agencies in the absence of specific AI requirements.

Contracts vs. other agreements. Agencies used different types of contracts to acquire AI capabilities. In some instances, agencies also leveraged other agreements not governed by federal acquisition regulations to develop more advanced AI capabilities.

AI as a service vs. a product. Some agencies bought AI as a product, such as software. Agency officials also told GAO that they acquire AI as a service, in which the vendor provides AI capabilities and outputs on an ongoing basis.

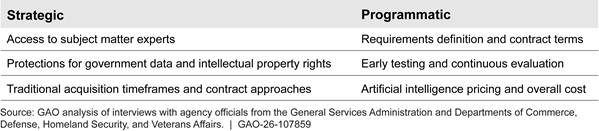

GAO identified several strategic and programmatic challenges agencies faced when acquiring AI capabilities.

In April 2025, the Office of Management and Budget (OMB) issued guidance to help agencies acquire AI responsibly. OMB directed agencies to update their AI policies to comply with OMB’s requirements. GAO previously reported that agency-level implementation is critical to achieving acquisition goals directed by OMB (GAO-25-107398).

In this review, GAO found the selected agencies were not yet systematically collecting lessons learned from AI acquisitions—a necessary first step to share knowledge about AI acquisitions in accordance with OMB guidance. OMB has stated that agencies should share knowledge about AI acquisitions through a web-based repository developed by the General Services Administration (GSA). However, officials at four agencies—GSA and the Departments of Defense (DOD), Homeland Security (DHS), and Veterans Affairs (VA)—told GAO they were not prepared to do so because their agency policies did not require them to collect lessons learned. As a result, the agencies are missing opportunities to identify and apply best practices—such as contract terms related to data rights or testing requirements—or to avoid mistakes as agencies increasingly acquire AI.

Why GAO Did This Study

Industry leads AI development, reportedly investing over $250 billion in 2024 alone. Federal agencies are finding many opportunities to use AI to execute their missions. They already use AI for veteran services, enhancements to weapon systems, and administrative tasks. To realize the benefits of AI, federal agencies often contract with companies to acquire solutions. Members of Congress and others have raised concerns about federal AI acquisitions. These concerns include long-standing acquisition issues, such as fostering competition, as well as issues specific to AI, such as training AI models on flawed data.

GAO was asked to review federal AI acquisitions. This report addresses (1) acquisition approaches agencies are using to adopt AI, (2) types of challenges agencies face when acquiring AI capabilities, and (3) the extent to which selected agencies are prepared to share knowledge related to acquiring AI solutions.

GAO conducted in-depth reviews of 13 AI acquisitions at four federal agencies—DOD, DHS, GSA, and VA. GAO selected these agencies based on maturity of AI acquisition efforts and approaches to acquiring AI capabilities, among other factors. GAO reviewed the agencies’ relevant policies, and interviewed senior AI acquisition leaders at the selected agencies. GAO also analyzed OMB guidance.

What GAO Recommends

GAO is making a total of four recommendations. Specifically, GAO is recommending that DOD, DHS, GSA, and VA update their policies to require officials to systematically collect lessons learned from AI acquisitions to enable sharing and application by other agencies. The agencies concurred with all of the recommendations.

Abbreviations

| Abbreviation | Full term |
|---|---|
| AI | artificial intelligence |
| DOD | Department of Defense |
| DHS | Department of Homeland Security |
| EO | executive order |
| FAR | Federal Acquisition Regulation |
| FEMA | Federal Emergency Management Agency |
| GSA | General Services Administration |
| IDIQ | indefinite delivery/indefinite quantity |
| IP | intellectual property |
| NGA | National Geospatial-Intelligence Agency |
| NIST | National Institute of Standards and Technology |
| OMB | Office of Management and Budget |
| OTA | other transaction agreement |
| VA | Department of Veterans Affairs |

This is a work of the U.S. government and is not subject to copyright protection in the United States. The published product may be reproduced and distributed in its entirety without further permission from GAO. However, because this work may contain copyrighted images or other material, permission from the copyright holder may be necessary if you wish to reproduce this material separately.

April 13, 2026

The Honorable Gary C. Peters

Ranking Member

Committee on Homeland Security and Governmental Affairs

United States Senate

The Honorable Thom Tillis

Chairman

Subcommittee on Intellectual Property

Committee on the Judiciary

United States Senate

Federal agencies are finding many opportunities to use artificial intelligence (AI) to improve how they execute their missions. They are already using AI to try to improve the services provided to veterans, enhance the effectiveness of weapon systems, and increase the efficiency of administrative activities, to name a few examples. To this end, agencies are engaging the private sector to acquire an array of AI solutions, including models, the infrastructure necessary for those models, and the systems that use AI model outputs.[1]

While often supportive of the promise AI offers, members of Congress and others have raised concerns about the federal government’s acquisition of AI solutions, particularly in the current landscape where AI capabilities, markets, and federal policy are rapidly evolving. Some of these concerns involve long-standing acquisition challenges, such as monitoring performance, obtaining necessary data rights, and increasing opportunities for competition. Other concerns are unique to acquiring AI solutions, including concerns that vendors may be training their models on flawed data, and that model performance may degrade over time, leading to unreliable outputs. To help agencies improve how they are acquiring AI capabilities, in April 2025, the Office of Management and Budget (OMB) issued Memorandum M-25-22, Driving Efficient Acquisition of Artificial Intelligence in Government.[2]

You asked us to review federal AI acquisitions. This report addresses (1) acquisition approaches agencies are using to adopt AI, (2) types of challenges that agencies face when acquiring AI capabilities, and (3) the extent to which selected agencies are prepared to share knowledge related to acquiring AI solutions.

To identify acquisition approaches agencies are using to adopt AI capabilities, we reviewed AI activities at the Departments of Commerce (Commerce), Defense (DOD), Homeland Security (DHS), and Veterans Affairs (VA); and the General Services Administration (GSA). Our review of Commerce’s activities focused primarily on government-wide initiatives led by the National Institute of Standards and Technology (NIST) and senior acquisition leaders, rather than specific AI solutions. We selected these agencies to account for relatively mature AI acquisition efforts (DHS and DOD), a large number of “high-impact” AI solutions (DHS and VA), and AI acquisition responsibilities spanning the federal government (GSA and Commerce).[3] We reviewed the agencies’ AI policies and interviewed senior acquisition leadership to understand the agencies’ AI acquisition processes.

For our purposes, we considered agencies’ AI solutions to be acquisitions based on agencies’ use of contracts—which we reviewed—to pay for AI products, services, license agreements, development support, or testing. We selected a nongeneralizable sample of 13 AI acquisitions from DOD, DHS, GSA, and VA for in-depth review to cover a range of factors, including a) impact on citizens (e.g., affecting agency decisions about allocation of benefits); b) different types of AI capabilities, such as machine learning or computer vision; c) how the AI solution was acquired, i.e., as a commercially available solution or through custom development; d) different acquisition phases (ranging from developmental contract awards to the end of the program life cycle); e) different contract types, such as firm-fixed price; and f) “high-impact” AI solutions. We selected solutions to achieve a mix of examples that account for variation across these factors.

To select the nongeneralizable sample of AI acquisitions, we used a multifaceted approach. For civilian agencies, we reviewed publicly available AI use case inventories found on each agency’s website, as well as OMB’s consolidated AI use case inventory from December 2024, which was the most complete data at the start of our review. We also reviewed news sources for AI use cases that matched our selection factors. We then coordinated with knowledgeable agency officials to determine if potential use cases we identified in the public inventories and news sources were correctly categorized as having an affiliated contract. In one case, agency leadership suggested a recent AI use case that was not in the public inventories. Based on these steps, we selected four use cases with associated contracts from DHS, two from GSA, and three from VA. To identify potential DOD use cases, we coordinated with officials from the Office of the Under Secretary of Defense for Acquisition and Sustainment and the Chief Digital and Artificial Intelligence Office to review a classified list of over 200 AI-related activities at DOD.[4] Using our selection factors outlined above, we selected four solutions with associated contracts or agreements to include in our in-depth review.

From our sample of AI acquisitions, we analyzed 44 contracts and agreements awarded between September 2018 and February 2025, along with supporting program documentation. In addition, we interviewed officials directly supporting the sample of AI acquisitions to understand the contract mechanisms and acquisition approaches they used to acquire AI solutions. For a full list of the AI acquisitions we reviewed in depth, see appendix I. To a lesser extent, we also reviewed highly publicized AI acquisition approaches announced in fiscal year 2025.

To determine the types of challenges that agencies face when acquiring AI solutions, we first interviewed officials at our five selected agencies, including agency leadership and staff supporting our sample of AI acquisitions. Among the challenges agency officials shared with us, we selected those identified by a majority of our five selected agencies and then grouped the most commonly cited issues into six challenge areas. We then verified that these challenges were issues across all five of our selected agencies. We reviewed OMB’s government-wide AI acquisition guidance, M-25-22, to identify steps in the guidance that corresponded to the challenges raised by officials at our five selected agencies. Specifically, we compared the required and recommended actions for agencies in that policy to the challenges identified through interviews with senior acquisition leadership from our selected agencies and officials from our nongeneralizable sample of AI acquisitions.

To assess the extent to which selected agencies are prepared to share knowledge about AI acquisitions as directed by the recent OMB guidance, we assessed requirements in M-25-22 and interviewed senior acquisition leaders and officials from our nongeneralizable sample about how, if at all, they documented lessons learned. We also conducted targeted reviews of relevant departmental or agency-specific AI policies to corroborate certain statements from these officials.

We conducted this performance audit from October 2024 to April 2026 in accordance with generally accepted government auditing standards. Those standards require that we plan and perform the audit to obtain sufficient, appropriate evidence to provide a reasonable basis for our findings and conclusions based on our audit objectives. We believe that the evidence obtained provides a reasonable basis for our findings based on our audit objectives.

Background

The term AI applies to a broad array of algorithms and techniques that enable computer systems to make predictions, learn concepts or tasks, and solve complex problems in a manner that mimics human intelligence.[5] Recently, industry-driven AI advances have rapidly enabled a wide variety of new capabilities with real-world applications that can support federal agencies’ missions. To help agencies realize the benefits of these recent advances, Congress and the Executive Branch have taken steps to improve federal AI acquisitions, including establishing guidance to help agencies better acquire AI.

Industry-led Evolution of AI Technologies

Key AI concepts, such as computer programming and algorithms, date back to well before the 1950s, when modern computing took hold. More recently, AI capabilities and their respective markets have evolved at a rapid pace. Industry has spent billions of dollars to support the development and refinement of AI models over the last decade. The Stanford University Institute for Human-Centered AI reported that the private sector leads AI development, with nearly 90 percent of notable models—those deemed particularly influential within the AI ecosystem—originating from industry in 2024, compared to 60 percent in 2023.[6] Those researchers also found that corporate AI investment reached over $250 billion in 2024 alone and that, over the past decade, the AI sector has expanded dramatically, with total investment growing more than thirteenfold since 2014. As a result, AI models have advanced from conventional systems that classify or predict to generative and frontier models capable of enabling a wide variety of real-world applications. For example, AI capabilities underpin online tax preparation services and facial recognition systems. More advanced AI systems, such as generative AI models, can create multimodal content, such as text, images, audio, and video.
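To put the thirteenfold investment figure in annual terms, the implied compound annual growth rate r can be worked out as below. This is illustrative arithmetic only: it assumes growth over the 10 years from 2014 to 2024 and treats "more than thirteenfold" as exactly 13, and actual year-to-year growth was not necessarily steady.

$$
(1 + r)^{10} = 13 \quad\Longrightarrow\quad r = 13^{1/10} - 1 \approx 0.29
$$

Because the reported growth was more than thirteenfold, the implied average rate is at least roughly 29 percent per year.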

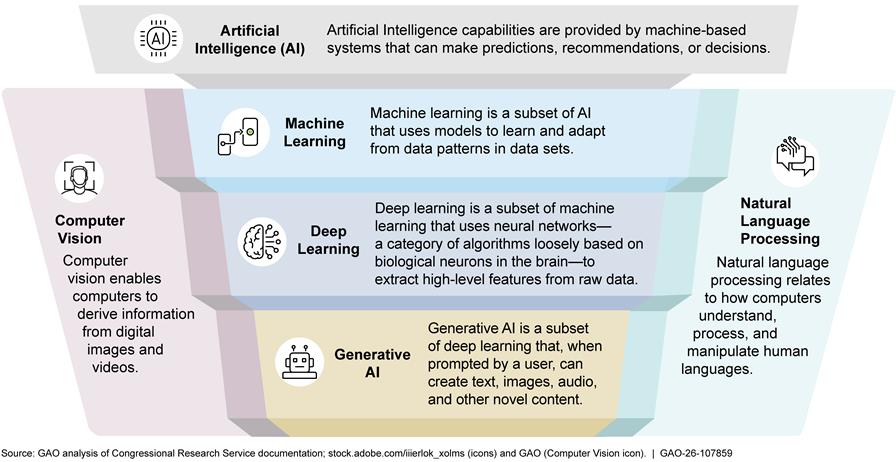

Types of AI Capabilities

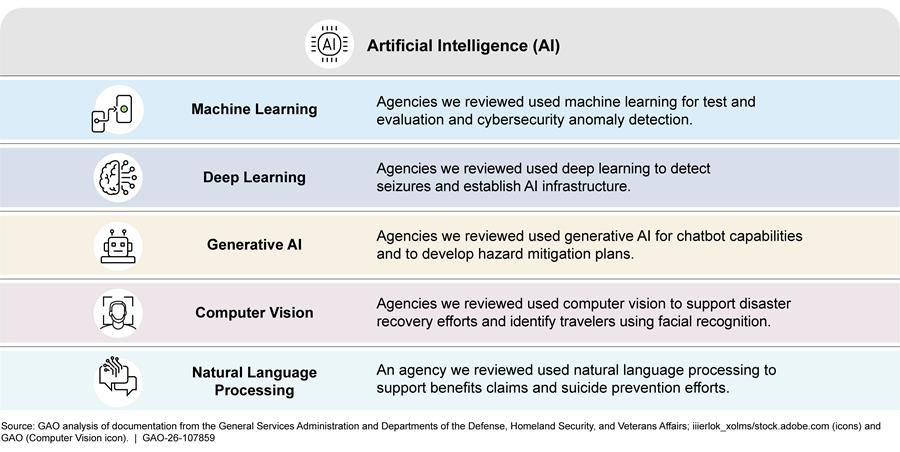

Within the field of AI, capability types such as machine learning and deep learning introduce increasingly complex types of models. In addition, some specific capabilities overlap these fields. For example, computer vision enables computers to derive information from digital images and videos, whereas natural language processing enables computers to understand, manipulate, and process human language. Figure 1 provides more details about the capabilities that we reviewed in our sample of AI acquisitions.

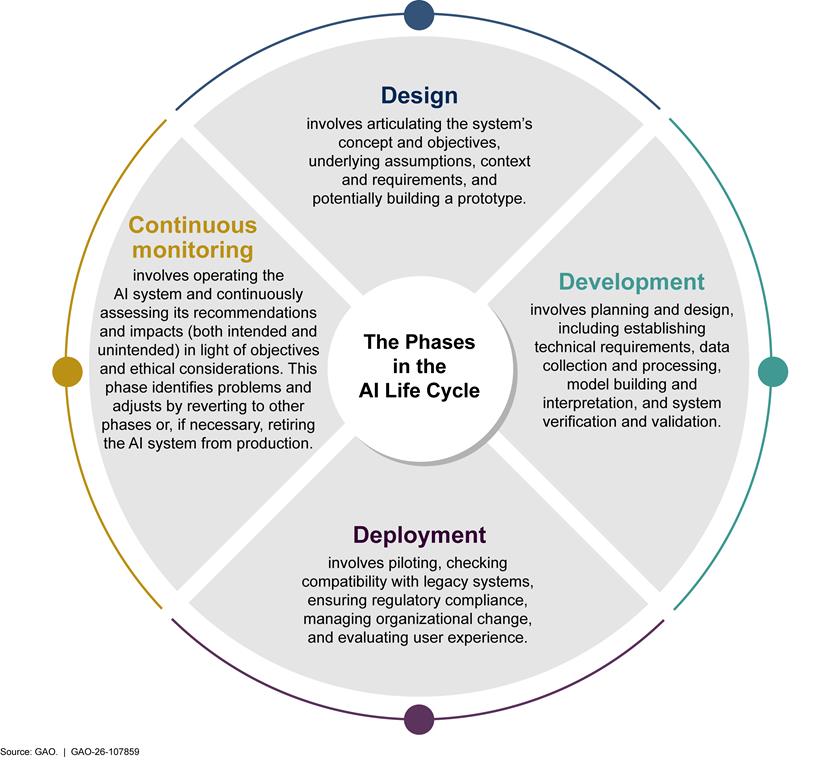

AI Development Life Cycle

The development life cycle of an AI system can be categorized into four phases that account for continuous learning: design, development, deployment, and continuous monitoring. These phases are often iterative and not necessarily sequential (see fig. 2).

Data and Computing Needs for AI

We have previously reported on the role that data play in developing AI capabilities, as machine learning systems begin with data—generally in large amounts—and infer rules or decision procedures that aim to predict specified outcomes.[7] High-quality data are critical for training, testing, and validating AI systems before and after deployment to ensure AI systems produce consistent and accurate results.[8] AI systems based on machine learning models learn from data, known as training sets, in order to perform a task. A testing set is a subset of the data used to test the trained model. The testing set should be large enough to yield statistically relevant results and be representative of the data set as a whole.
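The distinction between training and testing sets can be illustrated with a short Python sketch. It is a minimal example under stated assumptions: the function names, the 20 percent test fraction, and the toy labeled records are hypothetical, and a simple random hold-out is only one way to build a testing set (stratified sampling is a common alternative when some categories are rare).

```python
import random
from collections import Counter


def train_test_split(records, test_fraction=0.2, seed=0):
    """Randomly partition records into a training set and a held-out testing set.

    Hypothetical helper for illustration. Shuffling before splitting helps the
    testing set resemble the data set as a whole; a fixed seed makes the split
    reproducible so results can be rechecked later.
    """
    if not 0 < test_fraction < 1:
        raise ValueError("test_fraction must be between 0 and 1")
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    # The first n_test shuffled records form the testing set; the rest train the model.
    return shuffled[n_test:], shuffled[:n_test]


def label_shares(records):
    """Return the fraction of (id, label) records carrying each label."""
    counts = Counter(label for _, label in records)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


if __name__ == "__main__":
    # Toy data: 1,000 (id, label) records, 25 percent labeled "survey".
    data = [(i, "survey" if i % 4 == 0 else "claim") for i in range(1000)]
    train, test = train_test_split(data)
    print(len(train), len(test))  # 800 200
    print(label_shares(data))
    print(label_shares(test))
```

Comparing the label shares of the testing set with those of the full data set is a quick check that the testing set is representative; it does not by itself show that the set is large enough.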

Computing requirements also play a key role in developing AI capabilities. To process vast volumes of data, AI systems often require considerable computing power and new architectures, frequently cloud-based, to support model training and development. Stanford University researchers found that the training computing power (also known as compute) for notable AI models doubles approximately every 5 months.[9] New academic research shows that large-scale industry investment drives these gains in model scale and performance. We have reported that major breakthroughs in computing power and data availability over the past decade were needed to develop advanced AI models.[10]
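A doubling time of about 5 months compounds quickly. As illustrative arithmetic only, assuming that doubling time holds constant, training compute C after t months, relative to a starting level C_0, grows as follows:

$$
C(t) = C_0 \cdot 2^{t/5}, \qquad \frac{C(12)}{C_0} = 2^{2.4} \approx 5.3, \qquad \frac{C(24)}{C_0} = 2^{4.8} \approx 27.9
$$

That is, a constant 5-month doubling time implies roughly a fivefold increase per year and nearly a 28-fold increase over 2 years.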

Federal Contracting

Federal agencies rely on the private sector to deliver goods and services that help agencies perform their missions, typically using contracts to acquire software, cutting-edge technologies, and commercial products and services. AI can fall into all three of these categories.

The federal acquisition life cycle can be categorized into three general contracting phases: pre-award, award, and post-award.

· Pre-award phase activities often include defining requirements, acquisition planning, and preparing the solicitation.

· Award phase activities often involve the evaluation of offers, price negotiations and discussions with offerors, and the selection of awardees.

· Post-award phase activities often involve agency oversight of contractor performance—which includes continuous monitoring—and contract administration and closeout.

Agencies typically follow the Federal Acquisition Regulation (FAR) and agency supplements to award contracts.[11] The FAR provides agencies with a broad range of contract types and vehicles to acquire products and services. These include leveraging established government-wide or agency-wide multiple-award indefinite delivery/indefinite quantity (IDIQ) contracts or developing custom solutions by entering into a contract with one vendor using a variety of contract types, such as fixed-price, cost-reimbursement, or single award IDIQ. The FAR also states that agencies may acquire commercial products or commercial services—which generally refer to qualifying products and services that are sold or made available to the general public—using streamlined procedures.[12] AI solutions may be commercial, and these procedures can help agencies acquire them faster.[13]

Established government-wide or agency-wide multiple-award contracts. Agencies can use the Federal Supply Schedule program, also known as the Multiple Award Schedule program, which is directed and managed by GSA and provides federal agencies with a simplified process for obtaining commercial supplies and services at prices associated with volume buying.[14] Schedules are catalogs of related products and services from preapproved vendors with established pricing that can be used by federal agencies to obtain goods and services, ranging from office furniture to medical equipment and supplies.[15] When leveraging the Federal Supply Schedule, agencies can place orders using IDIQ contracts awarded by GSA.[16]

Agencies can also place orders using existing agency-wide vehicles such as blanket purchase agreements or multiple award IDIQ contracts. Blanket purchase agreements are agreements (not contracts) between government agencies and qualified vendors. They contain pre-negotiated terms and conditions, including prices, that are in place for future purchases and are a simplified method of fulfilling repetitive needs for supplies and services.[17] IDIQ contracts can be used when the exact quantities and timing for products and services are not known at the time of award.[18] Contracting officers are required, to the maximum extent practicable, to give preference to making multiple awards of IDIQ contracts under a single solicitation for the same or similar supplies or services to two or more sources.[19] These contracts may be available for use within an agency or across multiple agencies.

Custom solutions. When existing contracts will not meet an agency’s needs—for example, if commercial products or commercial services are not adequate to meet an agency’s requirements—an agency may award a new contract. The exact type of contract awarded will vary depending on factors such as the degree to which the requirements have been defined. For example, a firm-fixed price contract may be used to acquire supplies or services when there are reasonably definite requirements allowing the contracting officer to establish fair and reasonable prices at the outset. If the requirements are less defined, so that costs cannot be estimated with sufficient accuracy to use a fixed-price contract, a cost-reimbursement contract may be used, subject to certain limitations.[20]

Intellectual property (IP). In addition to providing contracting mechanisms, the FAR also implements laws and provides the basic regulatory framework governing how agencies may license and acquire contractor IP.[21] In general, using another entity’s IP requires permission, and the government typically uses licenses to obtain permission and define the scope of its rights to use a particular contractor’s IP. The federal government may also obtain data rights when the development of IP is funded by the government—in whole or in part—and the types of data rights obtained by the government can depend on how the IP is developed and funded.[22]

Recent executive orders. In March and April 2025, the President issued executive orders that directed agencies to take steps to consolidate procurement in GSA and to procure commercially available products and services to the maximum extent practicable.[23] Subsequently, OMB issued a memorandum stating that OMB and GSA would work closely with agencies to significantly increase consolidation efforts, noting that certain types of requirements are highly suitable for consolidation.[24] The Federal Acquisition Regulatory Council also made changes to the FAR that generally require agencies to purchase commercial products and services when possible and to use the Federal Supply Schedules and government-wide contracts under certain circumstances.[25]

In April 2025, the President issued an executive order directing OMB’s Office of Federal Public Procurement Policy to coordinate with the Federal Acquisition Regulatory Council and appropriate agency leaders to overhaul the FAR.[26] These officials are directed to ensure that the FAR contains only provisions that are required by statute or that are otherwise necessary to support simplicity and usability, strengthen the efficacy of the procurement system, or protect economic or national security interests.[27] The FAR Council has issued guidance with new text on a part-by-part basis, and agencies are generally expected to implement these changes by updating their own guidance. The FAR Council will later amend the FAR using formal rulemaking procedures.[28]

Other transaction agreements. Congress also provided certain agencies with the authority to enter into other transaction agreements (OTA), which are generally not subject to the FAR. For example, DOD is able to enter into OTAs for research, prototyping, and follow-on production of new technologies or products, subject to certain conditions.[29] OTAs may be used to enable agencies to structure agreements that may leverage commercial business practices and remove barriers to entry to encourage non-traditional contractors to do business with the government.[30]

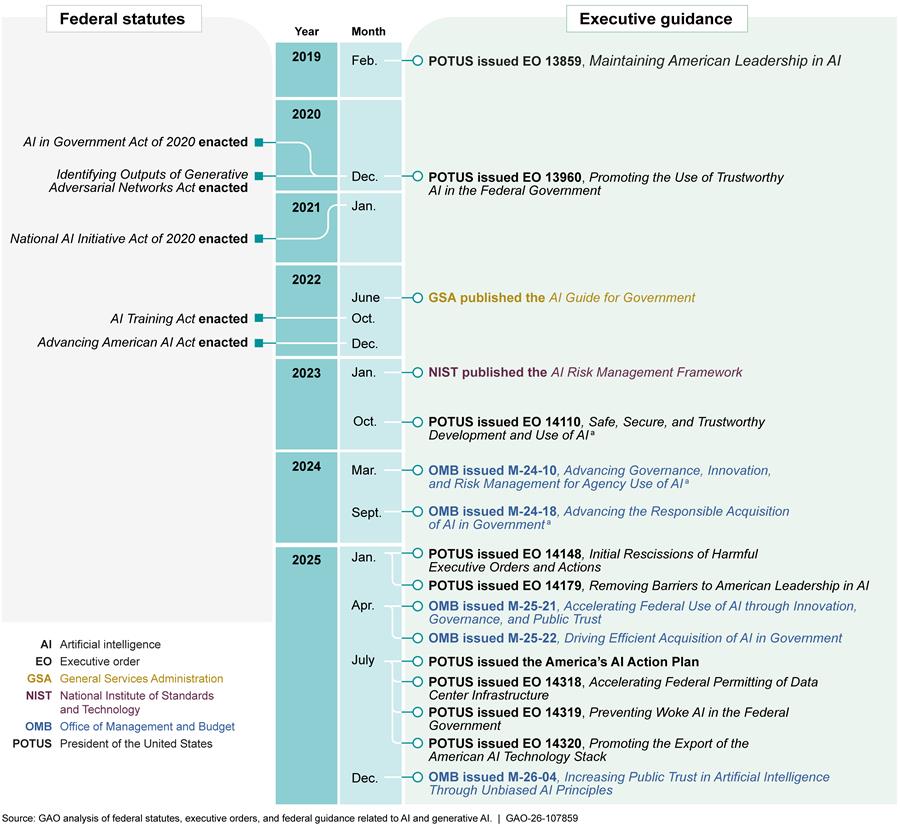

Federal Guidance on Agency Adoption of AI

Congress and the Executive Branch have taken several steps to improve federal AI adoption since 2019. Key legislative efforts have included requirements for certain agencies to develop AI use case inventories. Additionally, executive orders and OMB memorandums have provided guidance on federal government use of AI and ensuring responsible development and acquisition of AI capabilities, while GSA and NIST guidance provide federal agencies with information on building, investing in, and evaluating AI capabilities (see fig. 3).

(a) EO 14148, issued in January 2025, rescinded EO 14110 and OMB memorandums M-24-10 and M-24-18.

In April 2025, OMB issued M-25-22, which provided government-wide guidance for AI acquisitions. OMB M-25-22 focuses on three overarching themes to help agencies responsibly acquire AI: ensure a competitive AI marketplace; track AI performance and manage risk; and promote cross-functional engagement. Within those areas, OMB identifies required or recommended actions for agencies across the acquisition life cycle (see table 1).

| Acquisition phase | OMB Guidance for Acquiring Artificial Intelligence (AI) |
|---|---|
| Pre-award | Agencies should: · Convene cross-functional teams to inform the procurement of AI systems in a streamlined manner · Conduct thorough market research when planning an acquisition · Include provisions in solicitations to encourage AI proposals that offer knowledge transfer, data and model portability, clear licensing terms, and pricing transparency · Consider using performance-based acquisition techniques, such as statements of objectives, performance work statements, quality assurance surveillance plans, and contract incentives |
| Award | Agencies should: · Include contract terms that address use of government data, intellectual property rights, privacy, and vendor lock-in, among other requirements. Agencies are encouraged to: · Test proposed AI solutions to understand the capabilities and limitations before awarding contracts and throughout the acquisition life cycle |
| Post-award | Agencies should: · Receive an authorization to operate AI systems and services from an appropriate official prior to deployment · Perform effective system oversight consistent with contract terms · Work with vendors to implement contractual terms related to rights and data after deciding not to extend contracts |

Source: Office of Management and Budget (OMB) Memorandum M-25-22. | GAO-26-107859

Prior GAO Reports on AI

We previously reported on government-wide AI use case inventories and how federal agencies, including DOD and DHS, use AI for national security purposes.[31] We also reported on specific types of AI, such as generative AI technologies, as well as related information technology developments, including cloud infrastructure and high-risk emerging technologies for cybersecurity.[32] In addition, we assessed how AI affects safety and the environment, and published an AI Accountability Framework to help federal managers ensure accountability and responsible use of AI.[33]

As AI Technology Rapidly Evolves, Agencies Are Using a Wide Range of Acquisition Approaches

Agencies are increasingly adopting rapidly evolving AI capabilities to support their missions. For example, DOD is acquiring computer vision capabilities for military reconnaissance units, and VA is acquiring deep learning models to support veterans’ health care. Agency officials told us AI solutions can offer previously unavailable capabilities or increase efficiency and speed in delivering services. To incorporate these capabilities into their operations, we found that agencies are experimenting with a wide range of AI acquisition approaches and pursuing strategic initiatives to improve AI acquisitions government-wide. However, in a landscape with rapidly evolving AI capabilities, markets, and federal policy, it is too soon to tell which approaches will most successfully meet agencies’ requirements under different circumstances, and which initiatives will provide the best return on investment.

Agencies Are Increasingly Adopting Rapidly Evolving AI Capabilities to Support Their Missions

According to the Federal Chief Information Officer, federal agencies more than doubled their use of AI from 2023 to 2024, and congressional and executive branch efforts are underway to further adopt AI capabilities.[34] For example, Congress recently appropriated approximately $1.7 billion for AI efforts across the government.[35] In addition, the White House released America’s AI Action Plan, which lays out plans to accelerate innovation and adoption of AI within federal agencies.[36]

Officials at our five selected agencies told us AI solutions can offer new capabilities or more efficient delivery of services. For example, we found that DHS acquired machine learning and computer vision capabilities to quickly identify areas needing additional attention following natural disasters. We also found that VA acquired natural language processing capabilities to analyze veterans’ survey responses and benefits claims. In another example, GSA established partnerships with leading AI companies—OpenAI, Google, and Anthropic—to provide chatbot capabilities, among others, that promise to increase the efficiency of administrative functions. Figure 4 provides illustrative examples of agencies’ use of AI to support their missions.

Commercial developers are continually updating AI capabilities and fine-tuning models for specific uses, including uses at federal agencies, which have ranged from air traffic control to patent application reviews. Although the abundance of solutions and vendors provides federal agencies opportunities to improve their operations, rapid technological development has in the past rendered entire infrastructures or business cases obsolete. For example, a senior official at GSA compared the current AI environment to the abundance of search engines that emerged in the early 2000s. This official explained that agencies should avoid committing to the AI equivalents of AltaVista or Ask Jeeves, because other competitors will likely rise, as Google did, to become clear leaders in their respective fields.

Agencies Are Experimenting with Various Acquisition Approaches as They Adopt AI

Federal agencies generally have great latitude when determining which acquisition approach to use to meet a particular requirement, and that is true for AI acquisitions as well. This is because each acquisition is unique, and what works well under one set of circumstances may not work well under another. Through the end of fiscal year 2025, agencies were continuing to experiment with various acquisition approaches across a wide range of AI capabilities in an effort to effectively incorporate AI into their operations.

Agency-directed vs. vendor-driven. Some agencies seek solutions to meet key mission needs by awarding new contracts to develop or leverage specific AI capabilities. For example, DHS used GSA’s Federal Supply Schedule to acquire generative AI capabilities that could help develop portions of hazard mitigation plans for local communities, in this case communities affected by natural disasters. We also found that industry sometimes introduces capabilities, some of which are commercial, to agencies to meet their needs in the absence of specific AI requirements. For example, VA awarded a task order off a GSA schedule to acquire a medical software solution with AI capabilities. In another example, GSA acquired facility and equipment maintenance tracking software that included a chatbot capability for customer service support. GSA officials told us that the vendor offered the chatbot as an added capability and not in response to a defined requirement.

The benefits of an agency-directed approach using typical acquisition planning steps include a deliberate decision-making process that yields AI terms and conditions reflecting agency requirements. However, this process can be lengthy and may slow the adoption of rapidly evolving AI capabilities. Alternatively, under a vendor-driven approach, particularly when commercial offerings are available, agencies can receive upgraded capabilities more rapidly. But omitting AI terms and conditions under this approach may increase the risk of unanticipated cost growth and operational challenges, such as “model drift,” in which a model’s reliability decreases over time.
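One way an agency might check for model drift is to compare a model’s recent accuracy against the accuracy measured when the model was accepted. The Python sketch below is hypothetical: the class name, the baseline, the 5-percentage-point tolerance, and the rolling window are assumptions for illustration, not terms drawn from OMB guidance or any agency contract, and accuracy is only one of several metrics that could be monitored.

```python
from collections import deque


class AccuracyDriftMonitor:
    """Flags possible model drift when rolling accuracy falls below a baseline.

    Hypothetical illustration: baseline_accuracy, tolerance, and window are
    assumed values an agency might set, for example during acceptance testing.
    """

    def __init__(self, baseline_accuracy, tolerance=0.05, window=100):
        if window < 1:
            raise ValueError("window must be at least 1")
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        # Rolling record of whether each recent output matched the verified outcome.
        self.outcomes = deque(maxlen=window)

    def record(self, prediction, actual):
        self.outcomes.append(prediction == actual)

    def recent_accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def drift_detected(self):
        # Wait for a full window so a handful of early errors does not raise a flag.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.recent_accuracy() < self.baseline - self.tolerance


if __name__ == "__main__":
    monitor = AccuracyDriftMonitor(baseline_accuracy=0.90, tolerance=0.05, window=10)
    for i in range(10):
        monitor.record("approve", "approve" if i < 9 else "deny")  # 9 of 10 correct
    print(monitor.recent_accuracy(), monitor.drift_detected())  # 0.9 False
    for _ in range(2):
        monitor.record("approve", "deny")  # two errors push out two correct outputs
    print(monitor.recent_accuracy(), monitor.drift_detected())  # 0.7 True
```

Requiring a full window before raising a flag trades a slower response for fewer false alarms; under this sketch’s assumptions, the thresholds and the response to a flag are the kinds of details that contract terms could address.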

FAR-based contracts vs. other agreements. We reviewed several AI acquisitions that used different types of FAR-based contracts, including firm-fixed-price contracts, to buy licenses for commercial AI solutions. For example, DHS used a firm-fixed-price order to obtain a license for facial matching software for use across the department, including to assist with traveler identity verification at airports.

Alternatively, DOD leveraged its authority to use OTAs to develop AI capabilities. In July 2025, DOD awarded OTAs, each with a $200 million ceiling, to four leading AI companies to accelerate adoption of advanced AI capabilities across the department.

In addition, the Air Force indicated that it used another arrangement, known as a partnership intermediary agreement, to support the development of a chatbot similar to ChatGPT.[37] In this example, the Air Force partnered with the Wright Brothers Institute to expedite technology transfer, and it procured expert assistance with assessing technology capabilities and analyzing problems by issuing an order under the agreement.

The potential benefits of using non-FAR-based agreements such as OTAs and partnership intermediary agreements can include greater flexibility that may help address concerns from vendors who do not typically do business with the federal government. These concerns may include intellectual property rights, award time frames, and the need to establish government-unique cost accounting systems. However, we previously found that agencies’ use of OTAs carries the risk of reduced accountability and transparency compared to FAR-based contracts.[38] OMB M-25-22 states that agencies may need more transparency, rather than less, in contracts for AI systems that support “high-impact” use cases. For example, the memorandum states that agencies should determine early in the acquisition life cycle whether a system is likely to support “high-impact” AI use cases and, in solicitations for those systems, inform vendors of the transparency and documentation requirements they must meet.

Custom acquisitions vs. leveraging established contracts. Agencies acquired AI capabilities using direct contracts with vendors and agency or government-wide vehicles. For example, VA awarded a new contract to a single vendor to develop AI capabilities for identifying suicidal ideation indicators in veterans’ survey responses after considering GSA schedule and other offerings in its market research.[39] According to OMB guidance, the custom approach is suitable when mission needs are specific and require a high level of agency involvement during contract performance.

Conversely, agencies can leverage existing agency or government-wide contracts, such as the GSA Federal Supply Schedule, to meet their needs. For example, GSA launched the OneGov Strategy in April 2025 to modernize how agencies can procure and manage information technology and other commodities from a broad range of vendors for government-wide use. According to GSA, the OneGov Strategy ensures transparent pricing, streamlines acquisitions, and improves cybersecurity protections while providing the entire federal government access to leading AI models. As part of this strategy, GSA reported entering into agreements with OpenAI, Anthropic, and Google for government-wide use in August 2025.[40] Similarly, we found DOD entered into agreements with multiple vendors when it established Tradewinds, an acquisition ecosystem meant to deliver emerging technologies across the department.

OMB guidance states that a key benefit of leveraging established contracts is increased efficiency. For example, under the GSA multiple award schedule, agencies can place orders against previously established blanket purchase agreements for which GSA has already negotiated prices for certain products and services. Such an approach can expedite the acquisition process for agencies by streamlining certain procedures. To this end, in July 2025, OMB directed agencies to consolidate procurement through GSA, leveraging the agency’s expertise in addressing prevalent and repetitive needs.[41]

AI as a service vs. as a product. When proactively acquiring AI, agency officials told us that they often acquire the AI capabilities as a service, rather than as a product, and most of the contracts and agreements we assessed as part of our in-depth reviews were for services. For example, the Air Force procured support for its development of generative AI capabilities as a service to provide chatbot support across the department. In addition, agency officials told us that licensing agreements for software-as-a-service are frequently used for AI acquisitions. Most of the solutions we reviewed had contracts that included licenses, and most of those licenses were related to software procured as a service.[42] For example, the Army’s Project Linchpin included such license agreements both in its contract to establish departmental AI infrastructure and in a contract for test and evaluation services. This is consistent with guidance in OMB M-25-22, which recognizes acquired AI as systems or services. OMB guidance also encourages agencies to use Quality Assurance Surveillance Plans to assess both product and service contracts.

Agencies in our sample sometimes purchased software as a product, but AI systems, as defined in federal statute, continuously learn from experience by improving performance when exposed to new data.[43] As such, AI solutions require ongoing assessment to ensure models produce accurate results, which is conceptually different from acquiring products.

Additionally, agency officials who manage some of the most sophisticated AI solutions in our sample asserted that the quality of services vendors provide affects performance outcomes more than the quality of model algorithms (i.e., the core software product). For these reasons, we found that acquiring AI as a service can often be a preferred approach.

Some Agencies Are Pursuing Strategic Initiatives Intended to Improve AI Acquisitions Government-Wide

GSA and NIST—a bureau of the Department of Commerce—are pursuing strategic initiatives focused on improving AI acquisitions government-wide. These initiatives involve efforts to train the workforce on AI and improve evaluations of AI vulnerabilities and threats.

GSA has established a government-wide AI community of practice intended to encourage collaboration among federal agencies, enhance workforce capacities, and support the integration of AI to meet mission needs. GSA holds monthly meetings to inform agency personnel on topics such as federal guidance for acquiring and incorporating AI systems and commercial AI offerings for federal government use.

NIST has established the Center for AI Standards and Innovation to facilitate testing and collaborative research intended to harness and secure the potential of commercial AI systems.[44] As part of its efforts to support safe development of AI systems, the center intends to collaborate with various federal agencies and the private sector to conduct security evaluations and assessments of AI systems, as well as develop methods others can use to evaluate such systems.

Agencies Face Strategic and Programmatic Challenges When Acquiring AI Capabilities

Agencies reported facing strategic and programmatic challenges across the acquisition life cycle. Officials told us the strategic challenges broadly affected their ability to acquire AI solutions across their agencies. They told us the programmatic challenges more narrowly affected the ability of individual programs to procure desired capabilities. Table 2 summarizes challenge areas identified by agency officials.

| Strategic | Programmatic |
|---|---|
| Access to subject matter experts | Requirements definition and contract terms |
| Protections for government data and intellectual property rights | Early testing and continuous evaluation |
| Traditional acquisition time frames and contract approaches | Artificial intelligence pricing and overall cost |

Source: GAO analysis of interviews with officials from the General Services Administration and Departments of Commerce, Defense, Homeland Security, and Veterans Affairs. | GAO‑26‑107859

In April 2025, OMB issued guidance to help agencies acquire AI responsibly. OMB directed agencies to update their AI policies to comply with that high-level guidance, which we found corresponds to key acquisition challenges that agency officials identified.

Access to subject matter experts. Officials from each of our five selected agencies told us that limited access to subject matter experts, such as data scientists and software engineers, was a challenge for procuring AI technologies. Some of these officials asserted that subject matter experts are critical for defining requirements, such as data and infrastructure needs, and evaluating vendor proposals. For example, officials with VA’s Automated Decision Support effort told us they struggled to establish effective evaluation factors for source selection due to limited technical expertise. They clarified that having the right technical experts involved at the award phase of the acquisition life cycle is necessary to select vendors with the greatest ability to accelerate benefits delivery to veterans and their families.

Officials supporting other AI efforts told us technical experts can help agencies understand AI risks, establish performance metrics, and evaluate post-award performance. For example, at DHS, officials supporting the Cybersecurity and Infrastructure Security Agency’s Network Anomaly Alerting acquisition stated that they rely on subject matter experts to provide post-award vendor oversight to ensure efficient cyber threat detection. Officials told us that they faced challenges obtaining access to data scientists and cybersecurity experts and, as a result, encountered delays in completing acquisition tasks.

The strategic challenge of limited access to subject matter experts is reflected in OMB’s April 2025 guidance on AI acquisitions. Specifically, OMB M-25-22 directs agencies to convene cross-functional teams of agency officials with relevant expertise, including those with expertise in acquisition, IT, cybersecurity, privacy, confidentiality, civil rights, civil liberties, budgeting, data, legal, program evaluation, and other areas as necessary.[45]

Protections for government data and IP rights. Officials from each of our five selected agencies identified establishing data ownership and IP rights as a challenge to acquiring AI. Some officials leading more established acquisitions stressed that data is paramount for effective AI outcomes, and they asserted that the government should prioritize data ownership over acquiring algorithms. In the past, we found that DOD reported facing challenges related to reduced mission readiness and increased sustainment costs when it did not acquire, or was unable to acquire, necessary data rights for weapons systems.[46]

Officials from the Federal Emergency Management Agency’s (FEMA) Geospatial Damage Assessment solution told us they could not share model outputs with other federal and state partners because they did not obtain certain data rights at the award phase. Officials added that the ability to share such data with their partners post-award would be key for supporting disaster recovery as AI takes a larger role in future acquisitions.

Additionally, officials from the National Geospatial-Intelligence Agency (NGA) Maven program reported challenges determining the appropriate level of IP and data rights needed in the pre-award phase to promote future competition. They indicated a 2-day data rights summit with legal, program, and contracting personnel was held to discuss IP and data rights issues and appropriate contract language. However, following this summit, those officials expressed a continuing need for additional training on IP rights clauses for AI contracts.

OMB M-25-22 generally requires agencies to include contract terms that address IP rights and protect government data. Specifically, the memorandum directs agencies to have appropriate processes that address the use of government data and to include appropriate contract terms that delineate the government’s and the contractor’s respective ownership and IP rights. OMB M-25-22 also directs each agency to revisit, and update where necessary, its process for determining data ownership and IP rights in procurements for AI solutions and to prioritize standardization across contracts where possible. This direction corresponds to the strategic challenge of protecting government data and IP rights that we found at the agencies we reviewed.

Traditional acquisition time frames and contract approaches. Officials from each of our five selected agencies identified traditional acquisition time frames and approaches as poorly aligned with the speed of AI development. In the past, we found that the use of IDIQ and government-wide contracts can reduce administrative burden and increase efficiencies.[47]

Officials from the Army’s Project Linchpin explained that the traditional approach of developing a system for 5 years, followed by operations and maintenance, does not reflect the realities of AI. Similarly, senior leaders from VA told us that FAR-based contracts can take 2 years to award, explaining that many agencies struggle to move from pilot to production more quickly because of market research requirements and a contract approval process that can take up to a year. They added that, at this pace, agencies are no longer purchasing innovation.

Additionally, officials from FEMA’s Planning Assistance for Resilient Communities acquisition told us they could not meet key deadlines by following the traditional acquisition process to award a new contract for their specific requirements. They determined that using an existing contract was more expedient but limited the specific AI terms and conditions they could include. As a result, they told us they could not require the vendor to perform post-award testing to improve hazard mitigation planning features under the existing contract terms.

OMB M-25-22 directs GSA to develop a plan to release guides that address potential acquisition authorities, approaches, and vehicles for AI procurement, corresponding to the strategic challenge posed by traditional acquisition time frames and contract approaches at the agencies we reviewed. The memorandum also strongly encourages agencies to use performance-based acquisition techniques, such as using statements of objectives or performance work statements instead of traditional statements of work that may introduce limitations.

Requirements definition and contract terms. Officials from all five of our selected agencies identified defining requirements and contract terms as challenges. Some officials explained that well-defined requirements are needed to develop contract terms and performance metrics that allow agencies to meet mission needs, evaluate AI performance, and hold vendors accountable. For example, NGA officials told us that DOD initially funded Maven without well-defined or documented requirements to prioritize acquiring new capabilities as quickly as possible. These officials learned that without clear requirements defined in contracts they could not hold vendors accountable for weekly deliverables needed for Agile development. As a result, they are now adding metrics and model performance requirements before awarding follow-on contracts to better evaluate what vendors deliver against user needs. GAO has previously reported on challenges agencies have faced related to requirements definition and contract terms.[48]

In another example, officials with VA’s Automated Decision Support effort told us that VA’s standard contract language for AI is outdated. Specifically, officials reported that VA’s standard security language was not sufficient for the program’s requirements for protecting veterans’ information in a rapidly changing environment. As a result, officials reported working to develop more comprehensive security terms to meet mission needs. Officials added that it would be helpful to have government-wide, standard contract language to help hold vendors accountable for information security risk.

The programmatic challenge of defining requirements and contract terms is reflected in OMB M-25-22. The memorandum states that the government must communicate clear and specific requirements that make it easy for vendors to offer state-of-the-art AI capabilities to support efficient and effective public services. It strongly encourages agencies to focus on desired performance outcomes to identify requirements and directs agencies to include appropriate contract terms related to privacy, competition, testing requirements, and risk management practices.

Early testing and continuous evaluation. Officials from all five of our selected agencies identified challenges with determining the appropriate methods to test and evaluate AI technologies before and after award, citing the diversity and complexity of the systems and the need for robust, continuous testing. For example, NIST officials stated that well-defined, universal AI tests do not yet exist because of the variation and complexity of AI services.

Officials from multiple agencies also highlighted the need for continuous testing to prevent suboptimal or deteriorating AI model performance. For example, GSA officials supporting the USAi effort—a platform that provides agencies access to several generative AI models for chat-based AI and administrative support—told us that to address challenges with evaluating model performance, they are testing leading AI models against a battery of performance tests to evaluate results over time. They added that such testing is critical to be able to determine if new versions of those models justify their often much higher prices. Further, GSA officials told us that “high-impact” use cases also require additional testing and ongoing performance monitoring throughout the acquisition life cycle, which is consistent with OMB guidance.

OMB M-25-22 states that agencies should test proposed AI solutions to understand their capabilities and limitations before awarding contracts and should be able to regularly monitor and evaluate an AI system throughout its acquisition life cycle.[49] OMB M-25-22 also states that agencies should use data they have defined for validation and testing when conducting any independent evaluations. Specifically, the data should not be accessible to the vendor and should be as similar as possible to the data used when the system is deployed. That direction corresponds to the programmatic challenge of early testing and continuous evaluation that we found at the agencies we reviewed.
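To illustrate the kind of agency-controlled, continuous evaluation the memorandum describes, the following Python sketch scores a vendor model’s predictions from successive evaluation periods against a fixed, agency-held test set and flags possible model drift when accuracy falls more than a set tolerance below the baseline accepted at award. It is a minimal sketch under stated assumptions: the function names, the 5-percentage-point tolerance, the use of simple accuracy as the only metric, and the “damaged”/“intact” labels are hypothetical illustrations, not drawn from OMB M-25-22 or from any agency’s practice.

```python
from dataclasses import dataclass


@dataclass
class EvaluationResult:
    """Outcome of scoring one evaluation period against the agency-held test set."""
    period: str
    accuracy: float
    drift_flagged: bool


def accuracy(predictions: list[str], labels: list[str]) -> float:
    """Share of agency-held test items that the vendor model labeled correctly."""
    if not labels or len(predictions) != len(labels):
        raise ValueError("predictions and labels must be non-empty and equal in length")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def monitor(
    baseline: float,
    runs: dict[str, list[str]],
    labels: list[str],
    tolerance: float = 0.05,  # hypothetical: allowed drop below the award baseline
) -> list[EvaluationResult]:
    """Score each period's predictions and flag any period whose accuracy
    falls more than `tolerance` below the baseline accepted at award."""
    results = []
    for period, predictions in runs.items():
        score = accuracy(predictions, labels)
        results.append(EvaluationResult(period, score, score < baseline - tolerance))
    return results


# Hypothetical agency-held test labels; the vendor does not see these.
labels = ["damaged", "intact"] * 5  # 10 test items

q1 = labels.copy()
q1[0] = "intact"  # one error: 9 of 10 correct

q2 = labels.copy()
q2[0] = "intact"
q2[1] = "damaged"  # two errors: 8 of 10 correct

for result in monitor(0.9, {"Q1": q1, "Q2": q2}, labels):
    print(result.period, result.accuracy, result.drift_flagged)
# Q1 0.9 False
# Q2 0.8 True
```

In practice, the choice of metric, baseline, and tolerance would depend on the use case; a single accuracy figure is a simplification, and “high-impact” use cases would likely warrant richer measures.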

AI pricing and overall cost. Officials from all five of our selected agencies told us it is challenging to understand AI costs, particularly in rapidly evolving markets. These officials also cited uncertainty related to how vendors price AI-related licenses and services. For example, officials from the Army’s XM-30 AI solution, which provides enhanced targeting for its minimally crewed infantry combat vehicles, stated that AI licensing fees can be exorbitant, citing proposals that included prices of around $300,000 per vehicle per year. That proposal would have resulted in more than $500 million each year in licensing fees alone, in addition to the cost to acquire the vehicles. Army officials reported that they rejected this proposal and are exploring more affordable licensing models for their fleet.
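The two figures officials cited also imply the approximate fleet size behind the estimate. The following back-of-the-envelope calculation is an illustration derived only from those rounded numbers; it is not a figure reported by the Army, and the symbol N is introduced here for illustration.

$$
N \approx \frac{\$500{,}000{,}000 \text{ per year}}{\$300{,}000 \text{ per vehicle per year}} \approx 1{,}667 \text{ vehicles}
$$

Because the annual total was described as more than $500 million, the implied fleet would be at least this large, and licensing costs would scale linearly with each additional vehicle.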

Additionally, officials supporting the Army’s Project Linchpin explained that government buyers often underestimate overall costs because they consider only the cost to train AI models and overlook the infrastructure needed to support AI capabilities over time. These officials explained that programs find it difficult to understand how enterprise-level costs, like cloud infrastructure or computing power, will change in the future because they are more familiar with traditional hardware acquisitions. As a result, agencies may find that the AI solutions they acquire are too expensive to sustain over time.

OMB M-25-22 states that agencies must procure effective and trustworthy AI capabilities in a timely and cost-effective manner, and should include pricing transparency provisions in solicitations, to the extent consistent with other law and policy. The memorandum also states that agencies should perform evaluations to determine the value of the solution to the government throughout the acquisition life cycle. Such evaluations should consider comparative system effectiveness and any ongoing operation and maintenance costs. This direction corresponds to the programmatic challenge of AI pricing and overall cost that we found at the agencies we reviewed.

Knowledge Sharing Can Help Agencies Apply Lessons Learned

OMB M-25-22 states that agencies should share knowledge and resources about AI acquisitions—including tools and best practices, such as language for standard contract clauses—through a web-based repository developed by GSA. However, we found that agencies we reviewed are not consistently prepared to address knowledge sharing. Specifically, the agencies in our scope are not systematically collecting lessons learned from AI acquisitions, including when an acquisition was discontinued.

For example, VA officials told us they decided to retire the SoKAT solution, which supported suicide prevention efforts, in January 2023, after determining that it did not improve upon existing solutions enough to justify the additional cost. VA officials supporting that solution told us they did not document lessons learned. This was a missed opportunity because VA has several other AI acquisitions related to suicide prevention among veterans. Those acquisitions could have benefited from lessons learned from the SoKAT solution, which could have increased the likelihood that they would justify their additional cost.

Similarly, FEMA officials told us they did not document the input they shared with the geospatial imaging vendor to improve model accuracy in identifying damage caused by natural disasters. Specifically, they did not document their finding that the AI model struggled to distinguish between different types of dwellings. Those officials also anticipated accuracy issues when the model assessed imagery from regions other than those represented in the imagery used to train it. We found that FEMA recently opted to continue using that vendor for disaster relief purposes for another 12-month period. In the future, other FEMA personnel using the same contract to meet their disaster recovery needs across the country may not benefit from early knowledge about how reliably the vendor’s AI system performed.

Table 3 identifies two illustrative examples of AI acquisitions that took steps to address key challenges and identified lessons learned that could also benefit other AI acquisitions.

| Key challenges identified by agency officials | Lessons learned: DOD’s Maven Acquisition | Lessons learned: GSA’s USAi Acquisition |
|---|---|---|
| Access to subject matter experts | To inform key decisions across the acquisition life cycle, Maven officials reported developing a web of cross-functional teams that include computer scientists, software engineers, test and evaluation officials, cybersecurity experts, and product managers. | To support the USAi acquisition, GSA officials reported that GSA’s Chief AI, Information, Privacy, and Cybersecurity Officers closely coordinated to ensure access to relevant subject matter experts. |
| Protections for government data and intellectual property rights | As they executed subsequent contract actions, Maven officials reported developing more specific terms and conditions addressing data ownership and data rights. | GSA developed a USAi privacy policy and contract language that clearly outline data ownership expectations and limit how vendors can access chat interaction data. |
| Traditional acquisition time frames and contract approaches | Maven officials reported using Agile software acquisition approaches, such as weekly planning meetings and 90-day sprints, to deliver capabilities to end users at a relatively rapid pace. | GSA officials announced partnerships with leading generative AI vendors, including Anthropic, OpenAI, and Google, to create a solution that allows federal agencies to quickly acquire chatbot capabilities through USAi and leverage the cloud infrastructure needed to access the vendors’ models. |
| Requirements definition and contract terms | Maven officials reported learning that their early contracts lacked AI-related requirements needed to hold vendors accountable, and they added increasingly defined AI-related requirements in follow-on contracts. | GSA officials reported reusing contract terms for USAi that were effective in previous AI acquisitions, for example, contractual frameworks defining service expectations. |
| Early testing and continuous evaluation | Maven officials reported awarding relatively small contracts to several AI vendors to test their solutions prior to committing to larger acquisitions. | GSA officials reported they are testing multiple AI capabilities through a suite of reliability tests before awarding multi-year USAi contracts. |
| AI pricing and overall cost | Maven officials reported studying AI acquisition costs over time to inform a comprehensive cost estimate that accounts for critical capabilities and sustainment costs. | GSA officials reported pursuing a usage pricing model for USAi rather than a licensing approach to reduce overall costs, explaining that most employees are not expected to use the capability extensively. In addition, GSA reported using structured pricing to account for the complexity of expected model usage so that agencies do not pay more than needed for simple prompts or more sophisticated model features. |

Source: GAO analysis of Department of Defense (DOD) and General Services Administration (GSA) documentation and interviews with agency officials. | GAO‑26‑107859
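The rationale for GSA’s usage-based pricing for USAi, described in Table 3, can be expressed as a simple break-even condition. This is an illustrative sketch with hypothetical symbols, not GSA’s actual pricing terms: suppose a per-user license costs L per period, usage pricing charges p per prompt, an agency has U users, and user i submits n_i prompts per period.

$$
p \sum_{i=1}^{U} n_i < U L
\quad\Longleftrightarrow\quad
\bar{n} = \frac{1}{U}\sum_{i=1}^{U} n_i < \frac{L}{p}
$$

Under these assumptions, usage pricing costs less than licensing whenever average usage per user stays below L/p prompts per period, which is the situation GSA officials described when they said most employees are not expected to use the capability extensively. Structured pricing by prompt complexity would replace the single rate p with separate rates for simple and more sophisticated requests.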

Agency officials from DOD, DHS, GSA, and VA told us they did not systematically document lessons from their AI acquisition efforts because their departmental policies and guidance do not require them to do so. Our review of the four agencies’ departmental AI policies confirmed that none of the policies include a formal requirement or process to consistently document lessons learned even though some articulate their value.[50] Senior leaders confirmed that the departmental policies in effect as of September 2025 do not require agency officials to systematically document lessons learned from AI acquisitions.

According to OMB M-25-22, agencies are to implement its government-wide guidance through departmental policies. OMB directed agencies to update their AI policies to comply with the requirements in M-25-22 by December 29, 2025. In the past, we reported that agency-level implementation is critical to achieving the advantages of government-wide acquisition improvement efforts directed by OMB.[51] For example, we found that VA had not fully implemented its category management policy in response to OMB guidance, missing opportunities to save billions of dollars.

Without systematic, robust knowledge sharing, federal buyers are more likely to make avoidable mistakes across the acquisition life cycle when they buy AI in the future. Officials from all of our selected agencies told us they had to figure out how to acquire AI on their own, and that having lessons learned from other federal AI buyers to apply would help them achieve better AI acquisition outcomes. They also told us that having agency-vetted terms and conditions to include in contracts would be beneficial.

Conclusions

Federal agencies are navigating a rapidly evolving AI landscape where capabilities, markets, and government-wide acquisition guidance are in flux. These agencies have wide latitude to acquire AI solutions in multiple ways, including as products and services, with new and existing contract vehicles, and through a variety of acquisition approaches. Agency officials face many procurement challenges as they try to determine which acquisition approaches best suit their needs. OMB’s April 2025 guidance is a good starting point to address these challenges, but agencies need to follow through with their own policies. Among other things, this OMB guidance states that agencies should share knowledge and resources through a GSA-developed repository. However, we found DOD, DHS, GSA, and VA were not prepared to meet this provision. This lack of preparation stems from an absence of processes or requirements in agency policies to systematically collect lessons learned from AI acquisitions. Until agencies take action, they are missing opportunities to learn from more established acquisitions and increasing the risk that their future AI procurement efforts will repeat avoidable, and potentially costly, mistakes.

Recommendations for Executive Action

We are making a total of four recommendations: one to DOD, one to DHS, one to GSA, and one to VA. Specifically:

The Secretary of Defense should ensure that the Under Secretary of Defense for Acquisition and Sustainment updates the department’s policies to establish processes and require officials to systematically collect lessons learned from AI acquisitions—including best practices involving contract clauses—and submit them to the GSA-managed repository to enable sharing and application by other agencies. (Recommendation 1)

The Secretary of Homeland Security should ensure that the Under Secretary for Management updates the department’s policies to establish processes and require officials to systematically collect lessons learned from AI acquisitions—including best practices involving contract clauses—and submit them to the GSA-managed repository to enable sharing and application by other agencies. (Recommendation 2)

The Administrator of the General Services Administration should ensure that the Chief Acquisition Officer updates the agency’s policies to establish processes and require officials to systematically collect lessons learned from AI acquisitions—including best practices involving contract clauses—and submit them to the GSA-managed repository to enable sharing and application by other agencies. (Recommendation 3)

The Secretary of Veterans Affairs should ensure that the Principal Executive Director of the Office of Acquisition, Logistics, and Construction updates the department’s policies to establish processes and require officials to systematically collect lessons learned from AI acquisitions—including best practices involving contract clauses—and submit them to the GSA-managed repository to enable sharing and application by other agencies. (Recommendation 4)

Agency Comments

We provided a draft of this report to Commerce, DOD, DHS, GSA, and VA for review and comment. DOD, DHS, GSA, and VA concurred with our four recommendations and identified plans to address them in responses reproduced in appendixes II through V. For example, DHS plans to update its departmental guidance, including its current AI procurement process guide, to ensure it contains systematic collection and documentation of lessons learned from AI acquisitions by July 2026. Commerce and DHS also provided technical comments, which we incorporated as appropriate.

We are sending copies of this report to the appropriate congressional committees and the Secretaries of the Departments of Commerce, Defense, Homeland Security, and Veterans Affairs; and the Administrator of the General Services Administration. In addition, the report is available at no charge on the GAO website at https://www.gao.gov.

If you or your staff have any questions about this report, please contact William Russell at RussellW@gao.gov or Candice N. Wright at WrightC@gao.gov. Contact points for our Offices of Congressional Relations and Media Relations may be found on the last page of this report. GAO staff who made key contributions to this report are listed in appendix VI.

William Russell

Director, Contracting and National Security Acquisitions

Candice N. Wright

Director, Science, Technology Assessment, and Analytics

| Agency | Selected AI Acquisitions |
| --- | --- |
| DHS | Advanced Network Anomaly Alerting. DHS’s Cybersecurity and Infrastructure Security Agency uses machine learning to provide analytic cybersecurity support and anomaly detection. |
| | Geospatial Damage Assessments. DHS’s Federal Emergency Management Agency (FEMA) uses machine learning and computer vision to analyze structural damage caused by natural disasters based on aerial imagery. In February 2026, DHS officials reported this effort has been retired and is no longer in use at FEMA. |
| | Planning Assistance for Resilient Communities. DHS’s FEMA uses generative AI to help develop hazard mitigation plans. In February 2026, DHS officials reported this effort has been retired and is no longer in use at FEMA. |
| | TSA PreCheck Touchless Identity Solution. DHS’s Transportation Security Administration (TSA) uses neural networks and computer vision to provide facial comparison for airport security. |
| DOD | NIPRGPT. The Air Force uses generative AI to provide chatbot support across the department. |
| | Cyclops. The Army uses computer vision to enhance targeting for the XM-30 class of combat vehicles. |
| | Project Linchpin. The Army uses AI capabilities such as machine learning, computer vision, and deep learning as part of its effort to establish departmental AI infrastructure and deliver test and evaluation services. |
| | Maven. DOD’s National Geospatial-Intelligence Agency uses machine learning and computer vision to analyze geospatial imagery to identify potential targets for people to assess. |
| GSA | National Computerized Maintenance Management System Chatbot. GSA uses generative AI to provide facilities-specific support across the agency. |
| | USAi. GSA uses generative AI to provide chatbot capabilities across the federal government. |
| VA | Automated Decision Support. VA’s Veterans Benefits Administration uses machine learning, computer vision, and natural language processing to assess veterans’ benefits claims. |
| | Persyst Analysis Software. VA’s Veterans Health Administration uses deep learning to provide trend analysis and seizure detection support for veterans. |
| | SoKat Suicidal Ideation Engine. VA’s Veterans Health Administration used natural language processing to review veterans’ survey responses for signs of suicidal ideation to alert crisis responders. VA retired this system in January 2023 without entering production. |

Source: GAO analysis of documentation from the General Services Administration (GSA) and Departments of Homeland Security (DHS), Defense (DOD), and Veterans Affairs (VA). | GAO‑26‑107859

GAO Contacts

William Russell, RussellW@gao.gov

Candice N. Wright, WrightC@gao.gov

Staff Acknowledgments

In addition to the contacts listed above, Nathan Tranquilli (Assistant Director), Holly Williams (Analyst-in-Charge), Kyra Chan, Sean Manzano, and William Reed made key contributions to this report. Other contributors included Vinayak Balasubramanian, Lorraine Ettaro, Lori Fields, Laura Greifner, Chris Pecora, and Alyssa Weir.

The Government Accountability Office, the audit, evaluation, and investigative arm of Congress, exists to support Congress in meeting its constitutional responsibilities and to help improve the performance and accountability of the federal government for the American people. GAO examines the use of public funds; evaluates federal programs and policies; and provides analyses, recommendations, and other assistance to help Congress make informed oversight, policy, and funding decisions. GAO’s commitment to good government is reflected in its core values of accountability, integrity, and reliability.

Obtaining Copies of GAO Reports and Testimony

The fastest and easiest way to obtain copies of GAO documents at no cost is through our website. Each weekday afternoon, GAO posts on its website newly released reports, testimony, and correspondence. You can also subscribe to GAO’s email updates to receive notification of newly posted products.

Order by Phone

The price of each GAO publication reflects GAO’s actual cost of production and distribution and depends on the number of pages in the publication and whether the publication is printed in color or black and white. Pricing and ordering information is posted on GAO’s website, https://www.gao.gov/ordering.htm.

Place orders by calling (202) 512-6000, toll free (866) 801-7077, or TDD (202) 512-2537.

Orders may be paid for using American Express, Discover Card, MasterCard, Visa, check, or money order. Call for additional information.

Connect with GAO

Connect with GAO on X, LinkedIn, Instagram, and YouTube.

Subscribe to our Email Updates. Listen to our Podcasts.

Visit GAO on the web at https://www.gao.gov.

To Report Fraud, Waste, and Abuse in Federal Programs

Contact FraudNet:

Website: https://www.gao.gov/about/what-gao-does/fraudnet

Automated answering system: (800) 424-5454

Media Relations

Sarah Kaczmarek, Managing Director, Media@gao.gov

Congressional Relations

David A. Powner, Acting Managing Director, CongRel@gao.gov

General Inquiries

[1]For the purposes of this report, we use “model” to refer to the result of an algorithm “trained” on a set of data.

[2]Office of Management and Budget, Driving Efficient Acquisition of Artificial Intelligence in Government, OMB Memorandum M-25-22 (Washington, D.C.: Apr. 3, 2025).

[3]The Office of Management and Budget (OMB) defines “high-impact” as when AI output serves as a principal basis for decisions or actions that have a legal, material, binding, or significant effect on certain interests, including an individual’s civil rights and human safety. See Accelerating Federal Use of AI through Innovation, Governance, and Public Trust, OMB Memorandum M-25-21 (Washington, D.C.: Apr. 3, 2025).

[4]DOD is not required to maintain and publish an AI use case inventory as most executive branch agencies are required to do. However, the department is taking some steps to identify and maintain insight into AI activities across the department.

[5]GAO, Artificial Intelligence: Status of Developing and Acquiring Capabilities for Weapon Systems, GAO‑22‑104765 (Washington, D.C.: Feb. 17, 2022); and Artificial Intelligence: An Accountability Framework for Federal Agencies and Other Entities, GAO‑21‑519SP (Washington, D.C.: June 30, 2021).

[6]Nestor Maslej et al., “The AI Index 2025 Annual Report,” AI Index Steering Committee, Institute for Human-Centered AI (Stanford, CA: Stanford University, April 2025).

[9]Nestor Maslej et al., “The AI Index 2025 Annual Report,” AI Index Steering Committee, Institute for Human-Centered AI (Stanford, CA: Stanford University, April 2025).

[10]GAO, Artificial Intelligence: Generative AI Technologies and Their Commercial Applications, GAO‑24‑106946 (Washington, D.C.: June 20, 2024).

[11]The FAR and Defense FAR Supplement are currently undergoing a complete overhaul called the Revolutionary FAR Overhaul. The initiative began in April 2025 as directed by Executive Order 14275 (Restoring Common Sense to Federal Procurement) to restore the FAR to its statutory roots and remove most non-statutory rules or Executive Order requirements, other than text essential to sound procurement. Exec. Order No. 14,275, 90 Fed. Reg. 16,447 (Apr. 15, 2025). Phase 1 of the Revolutionary FAR Overhaul, the rewrite of the FAR, consisted of issuing model deviation text for the rewritten FAR parts. Starting in December 2025, DOD issued model deviation text for parts of the Defense FAR Supplement to align them with the Revolutionary FAR Overhaul. Phase 2 of the Revolutionary FAR Overhaul is ongoing, with the deviation text going through rulemaking. This report’s references to the FAR and Defense FAR Supplement are to the codified legacy FAR and Defense FAR Supplement, as our substantive work was conducted before the FAR and Defense FAR Supplement deviations took effect. However, because most federal agencies are no longer observing those provisions, we also describe the model deviation text, as appropriate.

[12]For the definition of a commercial product and commercial service, see FAR 2.101.

[13]Contracting officers can use streamlined solicitation procedures, which can reduce the time needed to solicit offers. See FAR subpart 12.6; FAR subpart 12.2 of the FAR model deviation text. For example, any acquisition that meets the definition of a commercial product or commercial service is generally exempt from the requirement to obtain certified cost or pricing data in order to determine price reasonableness. See FAR 15.403-1; FAR 15.403-2 of the FAR model deviation text.

[14]For more information about GSA’s Federal Supply Schedule program, see FAR subpart 8.4.

[15]Since 1960, GSA has delegated authority to VA to manage health care related schedules.

[16]For more information about procedures for placing orders or establishing blanket purchase agreements against a Federal Supply Schedule contract, see FAR 8.405 and subpart 538.71 deviation text. Blanket purchase agreements and orders placed against a Federal Supply Schedule contract using the procedures of that subpart are considered to be issued using full and open competition. See FAR 8.404(a) and 538.7102-1(a).

[17]See FAR 13.303 (FAR 12.201-1(e)(3) of the model deviation text). The FAR defines a blanket purchase agreement as a method of filling anticipated repetitive needs for supplies or services by establishing charge accounts with qualified sources of supply.

[18]See FAR 16.504-2.

[19]See FAR 16.504(c) and FAR 16.504-3(a)(1). Under a multiple award IDIQ contract, contracting officers generally must provide each awardee a fair opportunity to be considered for each order unless an exemption applies. See FAR 16.505(b)(1) and FAR 16.507.

[20]See FAR subpart 16.3.

[21]See FAR subpart 27.4. Agency supplements to the FAR may provide additional regulations governing licensing and acquisition of contractor intellectual property. See, for example, Defense FAR Supplement subpart 227.71.

[22]Data rights are also determined by whether the item, process, or software is commercial or non-commercial, and the purpose of the data in question.

[23]Exec. Order No. 14,240, 90 Fed. Reg. 13,671 (Mar. 25, 2025); and Exec. Order No. 14,271, 90 Fed. Reg. 16,433 (Apr. 15, 2025).

[24]Office of Management and Budget, Consolidating Federal Procurement Activities, OMB Memorandum M-25-31 (Washington, D.C.: July 18, 2025). The memorandum notes that acquisitions with simple requirements that are easy to standardize, are mission agnostic, and require low levels of agency involvement are more suitable to consolidation.

[25]For example, the model deviation text for FAR 8.104(a)(1) states that agencies must use an existing contract or Blanket Purchase Agreement awarded for government-wide use, such as the Federal Supply Schedule, if the Office of Federal Procurement Policy has designated the contract as one that agencies must use unless an exception applies.

[26]Exec. Order No. 14,275, 90 Fed. Reg. 15,621 (Apr. 9, 2025). This effort is also known as the Revolutionary FAR Overhaul.

[27]The White House, Restoring Common Sense to Federal Procurement, (Washington, D.C.: Apr. 15, 2025). Exec. Order No. 14,275, 90 Fed. Reg. 16,447 (Apr. 15, 2025).

[28]Office of Management and Budget, Overhauling the Federal Acquisition Regulation, OMB Memorandum M-25-26 (Washington, D.C.: May 2, 2025).

[29]As of October 1, 2024, DHS’s statutory authority to enter into other transactions has lapsed. In September 2025, DHS officials told us that the agency is actively pursuing legislative relief to restore this authority.

[30]OTAs do not always include default provisions that are ordinarily included in a FAR-based contract, such as clauses which may provide greater oversight into contractors’ costs. Instead, contracting officials must determine what protections to include. For additional information, see GAO, Other Transaction Agreements: Improved Contracting Data Would Help DOD Assess Effectiveness, GAO‑25‑107546 (Washington, D.C.: Sept. 3, 2025); COVID-19 Contracting: Actions Needed to Enhance Transparency and Oversight of Selected Awards, GAO‑21‑501 (Washington, D.C.: July 26, 2021); COVID-19: Critical Vaccine Distribution, Supply Chain, Program Integrity, and Other Challenges Require Focused Federal Attention, GAO‑21‑265 (Washington, D.C.: Jan. 28, 2021); and Transportation Security Administration: After Oversight Lapses, Compliance with Policy Governing Special Authority Has Been Strengthened, GAO‑18‑172 (Washington, D.C.: Dec. 21, 2017).

[31]GAO, Artificial Intelligence: Fully Implementing Key Practices Could Help DHS Ensure Responsible Use for Cybersecurity, GAO‑24‑106246 (Washington, D.C.: Feb. 7, 2024); Artificial Intelligence: Agencies Have Begun Implementation but Need to Complete Key Requirements, GAO‑24‑105980 (Washington, D.C.: Dec. 12, 2023); Facial Recognition Services: Federal Law Enforcement Agencies Should Take Actions to Implement Training, and Policies for Civil Liberties, GAO‑23‑105607 (Washington, D.C.: Sept. 5, 2023); and Artificial Intelligence: DOD Needs Department-Wide Guidance to Inform Acquisitions, GAO‑23‑105850 (Washington, D.C.: June 29, 2023).